The huge Milner prizes for nine well-known scientists, and the controversy they generated, have motivated me to relate a story. It happened during the theorist/experimentalist workshop that was held in early August (see also here) at the Perimeter Institute in Waterloo, Canada. And it illuminates something that many scientists, science commentators and science journalists, as well as science fans in the public, seem to be unaware of, but ought to know.

Before I start, I want to make one thing clear. I am by no means a flag waver for the string theory community; the theory’s been spectacularly over-hyped, and the community’s political control of high-energy physics in many U.S. physics departments has negatively impacted many scientific careers, including my own. On the other hand, I am also not going to tell you that string theory, as a theory, is somehow evil incarnate; I have done a certain amount of string theory research, and not only have I learned a great deal from it that I could not have learned any other way, doing the research had a positive effect on my career. So I feel it is unfortunate that string theory has been a political football, with two violent teams trying to kick the ball toward their opponents’ goal posts. From my perspective, the game is irrational and preposterous, reasonable people long ago refused to play it, and it is high time the ball were grabbed by the referee and placed quietly in the middle of the field where it belongs.

My story takes place on the evening of Friday, August 3rd, following the second day of the workshop, which brought together theorists and CMS experimentalists for discussions concerning research strategies at the Large Hadron Collider [LHC]. (CMS and ATLAS are the two general purpose experiments at the LHC, and their co-discovery of a Higgs-like particle was announced July 4th.) I was sitting at a square white table on the Perimeter Institute’s ground floor, illuminated by sunset light pouring in through the Institute’s plate-glass windows. Aside from me, those at the dinner table included six members of the CMS experiment, among them Joe Incandela, the current spokesman of CMS, who a month before had the great privilege of presenting CMS’s new discovery to the world. The eighth person at the table, sitting to my left between me and Incandela, was a theorist, David Kosower, an American working as a senior professor in France, at Saclay.

Discussion ranged widely, but at some point Incandela began describing how important the work of Kosower and his colleagues in the BlackHat collaboration had been in helping confirm the validity of a measurement technique that Incandela’s group (his postdoctoral researchers and students, and perhaps some of his faculty colleagues as well) had been trying to employ. This technique formed a crucial part of their effort to search at CMS for signs of new undetectable particles [i.e., particles that, like neutrinos, pass through CMS (and ATLAS) without leaving any trace]. (This is often billed as a “search for supersymmetry”, but in fact is a much more general way to look for many types of new particles that would be essentially invisible to CMS.)

Now, what is “BlackHat?” The Standard Model, the set of equations we use to describe all the known particles and non-gravitational forces in nature, works very well for predicting the processes that occur at the LHC — as far as we can tell. A crucial limiting factor in our ability to tell, however, is our ability to calculate. Many processes that we observe occurring at the LHC are quite complicated, and the rates at which such processes take place often cannot be calculated, currently, to better than 50% precision or worse. This means that if a new phenomenon were causing a certain process to occur 20% more often than in the Standard Model, it is quite possible that we would not yet know it, due to an imprecise Standard Model prediction. Over the years, theorists like Kosower and his colleagues, and their competitors pursuing other approaches, have gradually been calculating more and more complex processes at the precision levels needed for top-notch measurements at the LHC. And BlackHat is the computer program that Kosower and his friends have written to translate all of their theoretical insights and methods into actual predictions for the LHC experimentalists to use.
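To make the arithmetic in that last point concrete, here is a minimal sketch in Python. It only compares the size of a hypothetical excess with the size of the theoretical uncertainty; it ignores statistical and experimental uncertainties entirely, and the function name, the rate of 100 and the 5% figure are illustrative assumptions, not numbers from any actual CMS analysis or BlackHat calculation.

```python
def excess_over_theory_uncertainty(sm_rate, new_physics_boost, theory_precision):
    """Ratio of the excess caused by new physics to the theoretical uncertainty.

    sm_rate           -- predicted Standard Model rate (arbitrary units)
    new_physics_boost -- fractional increase from a new phenomenon (0.20 = 20%)
    theory_precision  -- fractional uncertainty on the prediction (0.50 = 50%)

    A ratio well below 1 means the excess hides inside the theory error band;
    a ratio well above 1 means the prediction is sharp enough to reveal it.
    """
    observed_rate = sm_rate * (1.0 + new_physics_boost)
    theory_error = sm_rate * theory_precision
    return (observed_rate - sm_rate) / theory_error


# A 20% excess against a prediction known only to 50%: ratio of about 0.4.
coarse = excess_over_theory_uncertainty(100.0, 0.20, 0.50)

# The same excess against a hypothetical 5% prediction: ratio of about 4.
sharp = excess_over_theory_uncertainty(100.0, 0.20, 0.05)

print(f"50% precision: excess is {coarse:.1f} times the theory uncertainty")
print(f" 5% precision: excess is {sharp:.1f} times the theory uncertainty")
```

With a prediction good only to 50%, the 20% excess is less than half the size of the uncertainty and could easily go unnoticed; with a much sharper prediction, the same excess would stand out clearly.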

What is truly remarkable is that today BlackHat and its competitors can do calculations that, as recently as 2005 or so, were thought to lie far beyond the reach of theorists during the entire LHC era. What happened? Well, there was a revolution in calculational techniques… and it has allowed measurements such as that carried out by Incandela and his group to be significantly more precise, in turn allowing us to know much more about what is present, and absent, in the data produced by the LHC.

The revolution, at least as far as BlackHat specifically is concerned, actually had two stages. It started in the 90s, when various new techniques allowed theorists sometimes to abandon the famous but extremely awkward method of Feynman diagrams for calculations. Many of these techniques were developed by Kosower along with Lance Dixon (professor at the SLAC laboratory outside Stanford University, where I was a graduate student), Zvi Bern (now a professor at the University of California at Los Angeles [UCLA]) and David Dunbar (now a professor at Swansea University in the UK).

But there was another key advance that occurred in the middle of the last decade. If you ask Bern, Kosower or Dixon, which you can do in part by reading their Scientific American article on BlackHat from May, 2012, they will tell you that one of the key developments was a technique called “on-shell recursion”. What this term means is very technical. Where it comes from is fascinating.

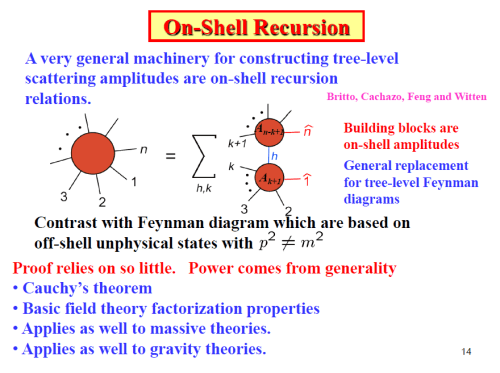

Here’s a slide from one of Bern’s recent talks, given in 2011 at the Institute for Nuclear Theory at the University of Washington.

You see that he refers to this technique as “a very general machinery” whose “power comes from [its] generality”. And he cites a paper, whose authors are Ruth Britto, Freddy Cachazo, Bo Feng and Ed Witten — three young people collaborating with the famed string theory grandmaster, winner of the Milner Prize and the Fields Medal, among many other awards. (The youngsters were then postdoctoral researchers working mainly on string theory at the Institute for Advanced Study in Princeton, where Witten and three other Milner prize-winners are faculty. Today Cachazo is a professor at the Perimeter Institute, Britto is a professor at Saclay, and Feng is a professor at Zhejiang University in China. Sadly, none of them took jobs in the United States.)

And Bern goes on: here’s a later slide, where he lists some key developments.

Second among them is the “realization of the remarkable power of complex momenta in generalized cuts” in another paper of Britto, Cachazo and Feng, which draws its “Inspiration from Witten’s twistor string paper.”

Bern is referring to Witten’s 2003 paper entitled “Perturbative Gauge Theory As a String Theory in Twistor Space.” What is that all about?

“Perturbative Gauge Theory” refers to a class of quantum field theories of which the Standard Model itself (in the context of the LHC) is an example; Witten was studying a simpler one, the so-called “maximally supersymmetric gauge theory”, which is the spherical cow [i.e. the simplest case — simplistic in some ways, but much easier to study] of perturbative gauge theories. “String Theory” is just what you think it is, and “Twistor Space” — well, whatever it is (and I won’t try to explain it here) it’s not the real world.

It was a remarkable paper, as Witten’s so often have been, drawing together numerous theoretical ideas (including some that played a role in the above-mentioned 1990s calculational revolution) into one unexpected place, and showing how rich their implications could be. But Witten’s paper had nothing directly to do with calculations relevant to the LHC; it had to do with using string theory in a weird and little-studied context to carry out calculations in the vastly simpler case of the maximally supersymmetric gauge theory. The whole thing lies, it would seem, infinitely far from experiments.

Yet the results Witten obtained led to the Britto, Cachazo, and Feng papers, including the one written with Witten himself, which managed to pull from the string theory developments some key insights that were needed for more general calculations. From there we follow the results to BlackHat, whose leaders all did some amount of string theory early in their careers but who turned by choice to practical calculations and invented many new techniques themselves. They’re exactly the sort of people you’d expect to dismiss these efforts by string theorists as naive. But no. They credit Britto et al. prominently for a key insight that makes BlackHat possible. And finally we arrive back at the dinner table, with me listening to Joe Incandela, who, fresh from the completion of the CMS paper on the observation of the Higgs-like particle, is praising BlackHat for its contribution to searches for new phenomena in CMS data. It’s only a few steps from Incandela to Witten — from experiment to the most apparently-useless edge of string theory.

So it’s unfair to Witten, when he is given a prize for some reason or other, to denigrate his theoretical work as something that cannot be tested experimentally — as though being “testable” were the only possible criterion for determining whether theoretical work has value for physics.

Here’s a theory that’s false as a theory of the world: a theory of only gluons, the particles that are associated with the strong nuclear force in the same way that photons are associated with the electromagnetic force. (This theory is often called “pure Yang-Mills theory.”) Obviously it does not apply to reality; the proton is made from quarks and antiquarks and gluons, not from gluons alone. Yet the study of this theory, both numerically using computers and conceptually, has given significant insight into how non-perturbative gauge theory works. Since non-perturbative gauge theory is what forms protons and neutrons, these insights have been helpful as a step along a longer road to develop better calculational tools in the non-perturbative context. So even though pure Yang-Mills theory is not a theory of nature, we nevertheless find it useful to study it very carefully.

The same standards should be applied to string theory. It may or may not be, as its hype-sters suggest, the “theory of everything” (a glib moniker that actually means “a single, unified theory of all the elementary forces and particles of nature, including the known particles and forces and also [at least] dark matter, so-called ‘dark energy’, and quantum gravity”). But that’s hardly the only important problem where string theory has something to contribute. String theory has made a number of important hard problems (in non-perturbative gauge theory, for instance) much easier to solve; it has helped address several long-standing conceptual puzzles in theoretical physics; and it has inspired many new ideas that have had application well outside of string theory. Consequently it has value, perhaps less than its proponents may claim, but certainly more than asserted by its detractors.

Indeed, the most extreme critics of string theory, who would perhaps argue that string theory should have been long ago cast out of theoretical physics, as a mathematical construct with no value for science, are in an increasingly untenable position. Would it really have been a good thing for high-energy physics at the LHC if no physicists had ever worked on twistor strings? This is not the only tough question an absolutist critic would have to answer.

Yet the detractors’ complaints have some merit. Personally, I feel string theory’s possible application to “everything” has been wildly over-promoted; for this purpose, string theory cannot be tested at present, and that situation might continue for a very long time, perhaps centuries. Meanwhile we have too many string theorists teaching at the top U.S. universities, and not enough theorists doing other aspects of high-energy physics, including Standard Model predictions, such as carried out by the BlackHat folks. As a result, far too few particle physics theorists were trained at top universities in the U.S. in recent years, and our theoretical LHC research is now spread very thin. But does this mean that string theory is a total waste of time or that Witten’s work is undeserving of high praise and recognition? I’ve given you just one of many reasons (and not by any means the strongest one) why the answer is clearly no; perhaps I’ll give you other reasons in future articles. (Assuming I survive to write them.)

I have found the war over string theory a very ugly thing to watch, and it’s been constantly unpleasant and professionally very damaging to be stuck in the cross-fire, as I’ve been for well over twenty years. Although both the virulently pro-string and anti-string camps have important and intellectually honest points to make, it seems to me that it’s time for both of them to accept a United Nations-monitored cease-fire. While they’ve been carrying out a destructive war on the field of battle, and fighting for public sympathy, an apolitical and pragmatic process has long been underway, often unseen and unsung, in which a certain peace and mutual appreciation has been established, leaving the belligerents on both sides out of date and out of step with reality. String theory is an essential tool in the toolbox of the theoretical physicist, and it’s here to stay — not because it’s necessarily the theory of “everything,” but because it has proven over the decades to be profoundly and broadly useful.

So on many levels I view it as inappropriate to criticize the Milner prize for Witten on the grounds that Witten’s work on string theory can’t be tested; it is not unusual for critically important theoretical work to lie more than one step away from experiment. Still, shouldn’t those who take those steps, such as Britto, Cachazo and Feng, and the BlackHat folks, deserve more credit than they’re getting? It’s clear that the practical benefit for high-energy physics would have been far greater if Milner had given three million dollars to the BlackHat collaboration, and to others pursuing similar aims. Is Milner reading this? Or maybe someone else with a deep pocket, and perhaps a greater commitment to the actual process of listening to what nature has to say? Ensuring that every drop of information is squeezed from the LHC’s data is arguably the highest priority in high-energy physics right now, and doing so is difficult and personnel-intensive. Private funding supporting the research of those who do the most important calculations could really make a difference.

132 Responses

It was a happy day when I began discovering the various physics blogs written by real physicists talking about real physics. (I was especially delighted to discover this site!)

It was an awful day when I began discovering that these physicists, these scientists trained in logic and rationality, were acting like poorly trained children arguing over ice cream. Mortifying! I was ashamed on behalf of science. And humanity.

If people with this level of education and training in thought and analysis can fall prey to such infantile behavior, what chance is there for the rest of the world to get it together? What an awful, awful example to set.

The sad reality of human existence is that high intelligence does not correlate with high levels of maturity, politeness, wisdom or kindness. In fact it often correlates with arrogance and self-assuredness, neither of which contributes to useful conversations. I speak from personal experience; I know my own limitations. In any case: scientists are just as human as everyone else, some of them are hot-heads, and some don’t listen to or respect other people.

Blogging, specifically, attracts hot-heads… some of whom are well-meaning and have scientific integrity, others of whom have an axe to grind… and the reader has to beware.

It’s still much, much better than in a lot of other subjects. And it’s a lot better than it used to be. Go read what scientists used to say about each other 80 years ago… Generally, people in this field DO respect each other’s opinions even if they disagree. Generally. Not always.

That’s a good point, that the blogosphere is not likely to be representative of the whole. Definitely a skewed sample! 🙂

I would agree that high intelligence often leads to confidence (or even arrogance), but I think those qualities can exist withOUT the immature and rude behaviors. There are arrogant adults (many of whom may have earned the right to some of that arrogance), and there are arrogant children.

Heated debate can be a wonderful, useful thing, but I wonder if modern society disdains polite interaction more than it ought.

As a general comment on modern society, I agree with your view. But again, look at how American politics was carried out in the 19th century. Things have gotten a bit worse recently, but they used to be a LOT worse. Still, it would be nice to see them get better again.

Indeed. I have a gut suspicion that the interweb is a game-changer due to its scope. Never in the history of the world have so many been so connected. McLuhan’s “global village” has been with us for some time, now, and I think it’s changing humanity. Not sure if the change is ultimately Good, Bad or just Change, but I feel we are at a major social crossroads.

Matt: Bern et al. are clearly very smart guys indeed, but the Sci Am article is, typically, not informative in the end, while the slides and their papers are impenetrable for beginners. Here’s a very accessible 2010 study of the basics of the unitarity method (the idea behind BlackHat) by Rogier Vlijm, who was then an undergraduate supervised by Eric Laenen at the University of Amsterdam.

http://www.science.uva.nl/onderwijs/thesis/centraal/files/f845765992.pdf

Rogier is clearly a very smart guy too.

Prof. Strassler,

I am in gross admiration of your very noble goals, and obvious time investments, developing this wonderful website. As I read these careful posts and expository articles you have written, I am speechless and overjoyed that such a resource exists for those like me, that are “fans” but only peripheral surveyors of the enormous complexity of QFT’s and standard model physics.

Reading some of these comments, and your responses to them, reinforces my impression of you as a tireless, fervent proponent of scientific knowledge/literacy even further. So much of the nonsense that is posted by obvious crackpots or just simply Science Channel/Pop-Science/Google news “experts” is loosely phrased gibberish even to me, but you STILL manage to respond with such sound incredulity… I am a professor of mathematical statistics, but I gave up teaching First year calculus/pre-calculus courses because I value what little hair I have left, and my patience was beginning to wear thin as I often wanted simply to say: “please… leave this course and never, ever come back.” You are undoubtedly one of the finest instructors your students could ever hope for, as you dignify nearly EVERY question that is posed to you, even the ones that are, a priori, ludicrous nonsense, with an elegant response.

Speaking of ludicrous nonsense, I do have a question of my own to any expert that can ex-posit to an amateur hobbyist. It was my impression that, by their very foundations “extending” basic quantum mechanics, String Theory and QFT’s are on quite a separate footing… QFT’s “start” their relativistic QM by “demoting” position to a label on operators, putting position and time on the same footing in the Klein-Gordon equation, and the set of operators assigned to each point in space is a QF. String theories instead promote time to operator status, and the “world-sheet” mathematics ensues by including other parameters in the state-space model beyond just a particle’s proper-time.

If this interpretation of the foundational developments of these theories is correct, is there an intuitive explanation on how string theory and QFT’s are somehow “compatible” once the mathematics gets beyond the simple relativistic QM assumptions?

I suppose I am looking for a physics-y Dan Dennett to pop up here, and tell me that we can have both free-will AND determinism, and somehow we all just get along fine…

GD: while Prof is out of the room, here’s a hiss from the back row. The two approaches you describe would be ‘compatible’ if they yield the same spectra for bound states, the same cross-sections for scattering, the same corrections for magnetic moments, the same chiral anomalies etc. etc. Heck, they might even both agree with data.

I weep for Matt reading all these comments. Hang in there…

Hi,

After reading all the comments and the very interesting article from Bern, Kosower and Dixon (now in German in ‘Spektrum der Wissenschaft’), I think the main point of this ground-breaking work is not ‘only’ to develop a new computer program, but, with this new method of calculating the QCD diagrams, to help bring supergravity back to the forefront of particle physics. So Hawking was right in his idea that supergravity is not dead. My point in this discussion is: Supergravity needs supersymmetry. Supergravity itself could be seen as a part of string theory (as a boundary-like property of M-Theory). Prof. Strassler is right to highlight this wonderful work of Bern, Kosower and Dixon. But this work is a major step in understanding and calculating the stringy universe, not against string theory.

Matt and others,

My main concern with string theory is that it seems to make conflicting predictions for the same physical phenomena. Just to give a few examples.

1) Most string theorists insist that string theory does not violate the equivalence principle. However, in papers by Thibault Damour and Cliff Will, they point out that string theory contains dilaton fields which violate the equivalence principle.

So it seems that string theory is consistent with both possibilities.

2) In Verlinde’s colloquium at Caltech last semester on entropic gravity (from what I hear, since I wasn’t present at that talk), he claimed entropic gravity follows from string theory and it explains MOND. OTOH almost all string theorists believe that “particle” dark matter exists and follows from string theory.

So again string theory seems consistent with both dark matter and MOND.

3) In Petr Horava’s colloquium at Berkeley on Horava-Lifshitz gravity (which is archived on video), he mentioned that this is a consequence of string theory. OTOH I have heard that Witten thinks that this theory is wrong.

So again another contradiction.

Anyhow, although I may be quoting (2) and (3) a bit out of context, my main point is string theory seems to be consistent with “any” observations and even conflicting theories.

>> talk at the Orsay Higgs workshop

Tried to follow your talk. Is H as “Composite” PNGB the same thing as little higgs (or should that be little H now)? And little in this context means naturally light?

Instead of open or closed strings why not open or closed spheres? Hence, 3 dimensions would do fine and no need for more dimensions.

Tim: why should this be an embarrassment? We can’t predict weather, we can’t predict the climate, and we can’t predict how 8 particles will scatter into 10 other particles. Processes with a lot of things flying around are messy, and not understanding such complicated things is not uncommon in science. Physics is NOT about calculating the most complicated processes out there; rather, it is about stating the laws with which such processes can be calculated.

Using these laws to calculate very complicated things can belong to complex systems/engineering/CS (depending on circumstances).

Of course another natural pursuit in physics is the study of the general consequences of the fundamental laws. Obviously 8->10 scattering does not belong here either. I am talking about *general consequences*, for example, existence of anti-matter, confinement, turbulence etc. This is also something many physicists are happy to do (derive general consequences from stated laws).

I didn’t say physics was only about calculating. I would say that physics is about explaining the phenomena we see in experiments, and certainly those explanations would involve the general consequences you describe.

But calculation is an important part of the understanding and validation of those explanations. As long as theorists cannot accurately calculate what happens for a proton, then to me there always has to be some small doubt that QCD really does provide a complete or correct description of the strong nuclear force.

First, the fact that calculation is hard and has room for improvement doesn’t say anything about a theory being complete or correct either way.

I don’t know about what you heard or haven’t heard, there have been enormous breakthroughs in QCD predictions of bound states, ie. calculating proton mass Ab-initio. See B-M-W Collab. paper here: http://uk.arxiv.org/abs/0906.3599v1

>> “Many processes that we observe occurring at the LHC are quite complicated, and the rates at which such processes take place often cannot be calculated, currently, to better than 50% precision or worse.”

I think this should be an embarrassment to all theorists. I appreciate the calculations are hard, but how does nature do it? Does God employ an army of angels calculating many loop Feynman diagrams every time protons collide?

It seems to me many theorists have taken the easy option and dream of other worlds with strings and branes and landscapes, where they are untroubled by the inconvenience of experimental data. Or maybe it’s just that mathematicians have taken over the shop.

So I do feel our understanding of fundamental Physics would most likely progress faster if the Milner money was given to the efforts of the folks trying to explain the real data coming out of the LHC.

From my point of view, Matt’s silence speaks tomes, just as much as his posts do.

If we all really care about Physics, let us all show our commitment to the science with sharp comments, informed by sound theories and proper facts.

Kind regards, Gastón E. Nusimovich

I find the whole conversation rather silly, where a personal jealousy appears to have replaced the reason. Would not be surprised if Milner wished he quietly wrote the cheques and gave them to people he thought deserved it most – in his eyes. He would have had every right to do so !

I end with note that comparing feat of creating Pythia with the feat of giving rigorous evidence for EM duality (Sen) or elucidating one of the building blocks of cosmology (Guth) is really preposterous.

We will now expose the real ignorant here. Name one conceptual advance by those people who wrote Pythia etc I will convert.

Reminder: unitarity cuts, on shell methods, soft limits, collinear limits, are all known for (many!) decades.

They were somewhat extended, but in most cases these extensions are not really rigorous even. And in any case there is nothing conceptually new here.

Hadronization models: very elaborate phenomenological models, we never know when they are going to work, not grounded in fundamental theory except for very old general theorems (factorization etc.) so I would not call improvements in this field conceptual, since the whole framework rests on mere guesses…

Jets: the fact that they are described in some limiting cases by CFTs is known for decades. You are just misguiding the readers here! See the refs in the relatively recent paper you mentioned.

Recent advances in jets: have nothing to do with Pythia, and also are not conceptual, they just come from a better study of the IR-safe observables of Sterman-Weinberg.

The construction of Pythia: combining a lot of known things and a lot of hard work and trial and error. Also many ways to get to end product that would do roughly the same thing. The end product is not unique, not earth-shaking, and not even ideal for the purposes it serves (I have worked with Pythia a few years rather intensively.)

Umesh,

There are no other physicists “like Edward Witten”, either among “calculational people” or “conceptual people”.

OK, no need to cherry pick one aspect of my previous comment. Now, though it’s sorta clear that Edward Witten is a cut above everyone, the real so to speak ‘meat’ is the fact that the rest of the awardees are comparably on par with him, or ‘in the same band’ if you wish. Of course you need no introduction to the conceptual depth of the ideas of many of those folks. You purposely seem to want to rake up an issue where there is none. Does this satisfy you?

No, you’re missing the point. The rest of the awardees are not on par with Witten, and arguing that Matt’s suggestions about who should get such a prize aren’t “like Witten” or “on par with Witten” makes no sense.

If Milner wanted to give a $3 million (or $27 million) prize to Witten for the kind of work he has done, there would still be some complaint that Witten doesn’t need the money so it would be better spent elsewhere, but no argument about the choice. One could easily come up with other disjoint lists for the rest of the eight, with the only difference between such a list and Milner’s that his have $3 million in their bank accounts. Once you decide you’re going to reward non-testable “conceptual” advances at a level below Witten’s where there are lots of possibilities to choose from, serious issues of what to prioritize arise.

You could probably come up with a list of about 20 names across physics that are on par with the other candidates at an achievement level, but probably not much more than that. There is very little debate about this choice in theorist circles; every one of the names is a superstar. You could perhaps also include some physicists working in condensed matter, but then I suspect that will be rectified soon.

In fact part of the problem with this prize is that within ten years, it will be hard to replicate the achievements of the initial batch of recipients, assuming it’s 6 or 7 names per year.

I completely agree.

Who you have in mind?!

You too stingy! Be nice! Plenty good people just in fields already honored. Some maybe done more pioneering work than the brilliant nine.

If exclude Nobel winners, just in `older’ generation there is Veneziano, Higgs, Englert, Kibble, Guralnik, Hagen, Green, Schwartz, Brink, Polyakov, Susskind, Zumino, Deser, Freedman, van Nieuwenhuizen, Ferrara, Georgi, Hawking, Penrose, Bekenstein, Starobinsky, Mukhanov, Steinhardt, etc. etc. See — not hard come up more than 20 good folks! And if include the around 50 generation, like the celebrated Black Hat peoples — then some more for sure. This is not include solid state/stat mech peoples — if include them many many more.

So, no need be snooty. What, you have a Nobel Prize or what?

Me thinks Milner Prize have many happy years and much fun choosing peoples almost as superstar as the first batch.

Since I am unable to find the ‘Reply’ button beneath your latest, I must content myself with posting here instead. You write:

“The rest of the awardees are not on par with Witten, and arguing that Matt’s suggestions about who should get such a prize aren’t “like Witten” or “on par with Witten” makes no sense.”

How are you even claiming that the rest of the awardees aren’t on par with Witten? Please, this is utter rubbish. One cannot even put a finger on what Witten has ‘extra’ which the other awardees ‘lack’. If I have to hazard my personal (and quite ill defined) opinion, I might put it down to sheer brain power. But achievements (read conceptual contributions, impact on research, papers etc) are quite indistinguishable, and possibly exceed Witten in some cases, like the original paper on duality by Sen (which, by Witten’s own admission was one of the seminal ingredients for the M-theory conjecture), and am sure one can look at other examples. Maldacena’s AdS/CFT is one of the most important conceptual advances in the whole of quantum gravity in the past 15 years. Are you trying to suggest that any of these are ‘below par’ in any sense of the word?

As far as Prof. Strassler and his comments are concerned, it’s simply atrocious to suggest that ‘calculational people’ (BlackHat etc) have to be put on the same level as Milner Prize winners. It’s as good as claiming that some random guy who has calculated the anomalous dimension of a certain operator to 4-loops deserves the same level of consideration as Einstein. There simply exists a hierarchy and will always. This choice is not made by any human agency, it’s made by natural selection. There’s a reason there’re only 8 permanent faculty who sit at the IAS.

You’re comfortable with agreeing that Einstein is an overarching figure in physics, above most others. At the same time, when one suggests that this is true even today, and picks a bunch who are ‘the most overarching in terms of contributions in the past 30-40 years’ you immediately seem to have an issue. Hypocrisy much?

Hi Umesh,

I agree with you but maybe you (and everybody else) should consider this wise piece of advice … ;-):

http://community.us.playstation.com/t5/image/serverpage/image-id/24591iF9BE7432FE53C47F/image-size/medium?v=mpbl-1&px=-1

This discussion highlights that modern physics has moved from its base of trying to understand the natural world to one of pure computational morass. If someone is a leading expert in computing higher order corrections of some particle interaction, good on them, but what makes that person any more important than a good programmer who can “non-perturbatively” integrate across multiple systems with different sets of coding languages? Or a financial wizard who can build a program that provides the probability of contract execution between different businesses? Not a whole lot from a pure intellectual exercise. What differentiates the recipients of the Milner prize from other potential candidates is a leadership quality in terms of their understanding of the physical world and their intellectual and internal fortitude to make and justify statements that represent departures from most physicists’ comfort zones. There is a deep understanding that the concept of mathematical consistency can allow for pushing the limits of what we know. Do we chastise Newton for being wrong about so much? Certainly it is the general insights and the formation of ideas that Newton provided that make him iconic. We now know Newtonian gravity is flawed, just as we now know that Einstein’s gravity is flawed, and yet both men are recognized as individuals who changed the way the world thinks, and not really for their pure intellectual skills (although usually one associates the former with the latter). Any objective assessment of Einstein’s work as compared to his contemporaries reveals nothing spectacular in computational competence, just sheer confidence in his intuition. I would argue that in the case of string theory, the jury’s not out about its importance to physics, but it is out on the world’s ability to contextualize it.

How many dimensions has the slighttest of fluctuations?

This is really interesting and fundamental question, how to define the dimensionality of observable objects. It’s question for geometers. What we already know is, the empty free flat space is just three-dimensional. If we can see something in it (even the subtlest lensing), it cannot be three-dimensional anymore (and the first additional dimension belongs to time dimension according to relativity). But even such a subtle fluctuation (a let say Gaussian blob) hides an infinite number of higher derivations in itself. From practical purposes it would be necessary to define effective dimensionality of objects or something similar.

I know it’s a waste of my time to say this, but these comments don’t mean anything and I feel the need to point this out.

‘How many dimensions has the slighttest of fluctuations?’

I cannot make sense of this statement. First off in one short sentence you have both a misspelling and a verb conjugation error–I am immediately less likely to trust you when I see you haven’t proofread a 1 sentence comment. But as for the physics: fluctuations of what? Of the metric or some bulk field? Of standard model fields? Also what are you looking for as an answer to this question? We have observed exactly 3 spatial dimensions and 1 time dimension, so obviously any number other than 4 would be part of speculative beyond standard model physics. And in that case the number of dimensions depends on the model you are considering, and on what kinds of field fluctuations you are interested in.

” If we can see something in it (even the subtlest lensing), it cannot be three-dimensional anymore”

Why not? You could presumably see me if we were in the same physical space, and I’m pretty confident of my own three-dimensionality.

/*@Andrew ..fluctuation of what..??*/

It doesn’t matter here. If you can detect some minute gravitational lensing or just one photon of CMBR radiation, then it’s evident, the space is not three-dimensional anymore. Only flat 3D space mediates the light in invariant speed. If we can see some lensing, then it’s evident, that this speed is not invariant anymore, because some refraction has taken place.

Disclaimer: Yes, I’m fully aware of fact, that the general relativity theory considers such an anomaly as a space curvature, not the sign of extradimensions. I’m aware, that from geometric perspective these objects are still just 3D objects, but this perspective is not consistent.

Professor Strassler,

I read with interest your point of view and I respectfully disagree.

While others have made significant progress in string theory and phenomenology, I think Milner made a very informed choice. One could argue endlessly about all the people (and projects) who did not get the high profile prizes, e.g. Freeman Dyson, Rosalind Franklin or Gandhi to name a few. Of course, there are only a finite number of prizes and there are bound to be some notable omissions.

Also, different people may have a different point of view on what or whose work is more important. Sure, the contributions of BCF are quite important and in a world where science is more appreciated, they deserve significant recognition too. However, Milner’s emphasis was on a “body of work” and it seems to me to be an excellent criterion for an award. Surely, no sane person would argue that the body of work of B, C, or F is larger than that of Maldacena, Guth, Sen etc.

Finally, there is no a priori reason that all the awards in the world should be based on experimental contacts. I think raising the profile of fundamental physics and honoring some of the important physicists is an incredibly worthy project. After all, there is no law of physics that says that all physicists have to be poor. For this Milner should be lauded rather than criticized for things that he was not able to do.

/*…but Kaluza, Klein, Einstein, .. ArkaniHamed, Dimopoulos Dvali paper, delberger, Alan Kostelecky is one of the world’s leading experts..*/

This is not famous naming contest. With full respect to You and all these ultrasmart guys, we should focus to problem from its fundamental physical and geometrical perspective. The complex thinking is rather obstacle of further progress here.

/* .. I don’t know where you got these mistaken ideas.. */

Dense aether theory models 4D space-time foam with water surface, the 2D water surface serves as an analogy of 3D space, the remaining direction is the analogy of time dimension. The transverse waves are serving as an analogy of light waves, they’re spreading in background independent way, i.e. in relativistic Lorentz invariant way just and only at the case, when they don’t interact with anything from underwater, i.e. with anything from third dimension. So we can see clearly with this analogy, that the Lorentz invariance is geometrically inconsistent with concept of extra-dimensions. Now please accept my apology, as I cannot follow the further discussion here during weekend.

“This is not famous naming contest.”

But have you read the papers?

/* .. but Kaluza, Klein, Einstein, and the generations of physicists who have considered this possibility are not a bunch of idiots.. */

This is not scientific argument. My non-scientific argument is, these generations of physicists are indeed bunch of idiots, because the Lorentz violation is observable at the case of every gravitational lensing and every force, which is violating inverse square law is the evidence of extradimensions (Casimir force, dipole forces, van der Waals forces, brute force..) – but it’s not considered a scientific argument… yet. But it’s still logical argument.

Read the Arkani-Hamed, Dimopoulos and Dvali paper, and the experimental papers by Adelberger et al., carefully. You will see your statements are wrong. I am making no claims here about *theory* (I personally don’t care whether string theory is right or wrong), but I do care very much that you not mislead my readers about the facts. And the fact is that from *experiment*, there are no limits on extra dimensions involving ordinary matter (electrons, photons, etcetera) below about 10^-18 meters, and involving gravitons below about 10^-6 meters. But don’t take my word for it. Alan Kostelecky is one of the world’s leading experts on violations of Lorentz Invariance; he’s written dozens of papers and review articles on the subject. Or Andy Cohen at Boston University. Ask them if extra dimensions smaller than the distances I just mentioned lead to measurable Lorentz Invariance violation. I don’t know where you got these mistaken ideas, but these are very easy things to check with a simple pad of paper and a wave equation.

/* extra dimensions do not, contrary to zephirawt’s statement, lead to Lorentz violation */

Try to object it, after then

“In theories with extra dimensions it is well known that the Lorentz invariance of the $D=4+n$-dimensional spacetime is lost due to the compactified nature of the $n$ dimensions leaving invariance only in 4d”.

http://arxiv.org/abs/hep-ph/0506056

I’d like hear your opinion in this matter…

You are completely missing the point. Measurements done at long distances compared to the size of the extra dimensions will show no measurably-large violations of Lorentz invariance. There may not be any extra dimensions in nature, but Kaluza, Klein, Einstein, and the generations of physicists who have considered this possibility are not a bunch of idiots.

Professor Strassler has spoken quite clearly & at length about all these issues, and he has been fair and balanced in his treatment of all theories under discussion in this thread (so, let’s cut the crap, will ya?)

Factual sciences like Physics do operate with rules and regulations that are not in line with the rules of things like democracies, where the latter care about the opinions of the majorities (or the opinions of the ruling faction!).

Factual sciences only care about the actual facts!

That being said, let’s get back to the facts, and the theories that are strongly supported by them!

/* extra dimensions do not, contrary to zephirawt’s statement, lead to Lorentz violation */

Not necessarily but after then they would remain unobservable. Look, I’m just explaining, why string theory leads into fuzzy landscape of solutions instead of single testable predictions, which is already accepted fact even with most of string theorists.

/* it already has made testable predictions, in the case where there are strings and extra dimensions as large as 10^-18 centimeters in size */

In string theory some of these extradimensions should be apparent even at micrometer or millimetre scale (actually you can choose whatever distance scale you want, it will already fit some ST solution). The experimental tests failed many times. http://www.scientificamerican.com/article.cfm?id=string-theorys-extra-dime

“In string theory some of these extra dimensions should be apparent even at micrometer or millimeter scale”. False. Simply false. How can you possibly make such a statement? Defend it scientifically.

“Theorists believe that these extra dimensions are curled up into small spaces, and it has been suggested that they may generate forces with strengths comparable to gravity over distances of about 0.1 mm.”

http://physicsworld.com/cws/article/news/2003/feb/27/no-sign-yet-of-extra-dimensions

peer-reviewed source http://www.nature.com/nature/links/030227/030227-6.html

It’s one hundred of microns, man…

Again, you are misinterpreting. First, your statement about hundreds of microns ruling out extra dimensions would have been wrong in 1998. That is why the Arkani-Hamed, Dimopoulos, and Dvali paper was written – to point out that no experiment rules out this possibility. Today, thanks to great work by experimenters, we know that extra dimensions of this particular class must be smaller than a few microns (I don’t have the number). Smaller than that? There is NO experimental bound.

Second, not even the theorists who were behind this work were saying that string theory (or any other theory) predicted extra dimensions MUST be so large. Only that experimentally, back in 1998, they *could* be so large. And now we know they must be smaller… but atomic size would be fine, as far as experiment is concerned. Maybe you ought to read my many articles about extra dimensions on this website; you will see the focus is on what we do and don’t know experimentally, not on what theorists do or don’t think.

Matt, thanks a lot for the swift and concise answer.

Now, regarding extra-dimensional theories, the usual argument against them that I have read about is that if they exist in nature, experimental data of certain physical magnitudes would not match the values of predictions by current (non extra-dimensional) theories, as part of the value of a given magnitude would “leak into” an undetected dimension and we would observe “missing” data.

Is this what dimension compaction in String Theories tries to address? That the extra dimensions are so compacted, and thus so small, that we are not able to measure with enough precision the “leakage” of values that actually exists?

To me, such an answer does not sit well with Mach’s phenomenalism concept.

Could you please, Matt, elaborate a little on how you see this?

This is a very complicated story, because there are many different ways that extra dimensions could be present in nature. I’d have to go through all of the options very carefully and explain the pros and cons of each one. The argument you have read is not appropriate for many of those options. I have gone through a few cases in my Extra Dimensions articles, http://profmattstrassler.com/articles-and-posts/some-speculative-theoretical-ideas-for-the-lhc/extra-dimensions/ and the pages below that one, but I haven’t had time to complete them — partly because it is such a long and complicated story.

Furthermore, for each option, it usually doesn’t involve an argument about “yes they exist” or “no they don’t exist”. Experiment generally can only say “extra dimensions of a particular class cannot be larger than such-and-such a size.” That’s not “yes” or “no”; it’s merely “definitely not larger than this-big, but still possibly smaller than that.” And that’s always what experiment does for you. We all want yes-no answers, but science rarely provides such crisp knowledge.

It should be emphasized that it is not string theory which introduced the well-motivated idea of extra dimensions into physics; it was Kaluza and Klein, many decades ago, and then Einstein followed these ideas for many years in the later part of his life. And yes, Kaluza and Klein (and Einstein) had the idea that these extra dimensions were finite in extent (which is what string theorists call compactification.) A finite extra dimension leads to a prediction that for those fields that can extend into the extra dimension, which means the field’s corresponding particles can therefore travel in the extra dimension, the field’s particles, from the point of view of the dimensions we know, will also have partner particles, called Kaluza-Klein partners, that are heavy; the smaller the extent of the dimension, the heavier are the particles. http://profmattstrassler.com/articles-and-posts/some-speculative-theoretical-ideas-for-the-lhc/extra-dimensions/how-to-look-for-signs-of-extra-dimensions/

We’ve never observed Kaluza-Klein partners for any of the known particles, and that puts some constraints on how big extra dimensions can be. But there are subtleties in that remark, because some fields and their particles might be stuck to the side of an extra dimension, while others could travel freely, and only the latter would exhibit such partners. http://profmattstrassler.com/articles-and-posts/some-speculative-theoretical-ideas-for-the-lhc/extra-dimensions/how-to-look-for-signs-of-extra-dimensions/how-big-could-an-extra-dimension-be/
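As a rough illustration of the "smaller dimension, heavier partners" statement above, here is a minimal sketch. It assumes the simplest textbook case, which is only one of the many options: a single extra dimension curled into a circle of radius R, for which the n-th partner of a particle of mass m_0 has m_n^2 = m_0^2 + (n ħc/R)^2. The function name `kk_mass_gev` and its parameters are made up for this example.

```python
import math

# hbar*c expressed in GeV*metres (the standard value is 197.3269804 MeV*fm)
HBAR_C_GEV_M = 1.973269804e-16


def kk_mass_gev(radius_m, mode=1, m0_gev=0.0):
    """Mass in GeV of the mode-th Kaluza-Klein partner.

    Assumption (not a general result): one extra dimension that is a
    circle of radius `radius_m` metres, so that
    m_n^2 = m_0^2 + (n * hbar*c / R)^2.
    """
    return math.sqrt(m0_gev ** 2 + (mode * HBAR_C_GEV_M / radius_m) ** 2)


# Shrinking the circle pushes the lightest partner to higher mass.
for radius in (1e-6, 1e-10, 1e-18):
    print(f"R = {radius:.0e} m -> lightest partner ~ {kk_mass_gev(radius):.3e} GeV")
```

With R = 10^-18 meters the lightest partner of a massless particle comes out at roughly 200 GeV in this simple picture, which is why collider searches are sensitive to dimensions of about that size and not to much smaller ones.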

The one other crisp statement is that if there were huge extra dimensions that were just like the ones we know (i.e., “flat”), but we somehow were unable to see them because our bodies and photons were trapped in a three-dimensional subspace of a more-dimensional space, like a worm trapped between two panes of glass, then Newton’s law of gravity would be proportional to 1/(distance)^3, or 1/(distance)^4, or more generally 1/(distance)^(2+n), where n is the number of the very large extra dimensions. Since Newton’s law is 1/(distance)^2, we know that n=0; there are no huge flat extra dimensions that we just can’t (literally) detect directly with our senses or with any instruments made from ordinary matter. http://profmattstrassler.com/articles-and-posts/some-speculative-theoretical-ideas-for-the-lhc/extra-dimensions/how-to-look-for-signs-of-extra-dimensions/extra-dimensions-newtons-gravity/
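The scaling in the previous paragraph can be made concrete with a few lines of code. This is only a sketch of the 1/(distance)^(2+n) rule for huge, flat extra dimensions; the function names are invented for the example, and nothing here applies to small or curved extra dimensions.

```python
def gravity_exponent(n_extra):
    """Power p in F ~ 1/distance**p with n_extra huge, flat extra dimensions."""
    return 2 + n_extra


def force_ratio(distance_factor, n_extra=0):
    """Factor by which the force changes when the distance is multiplied
    by `distance_factor`, under the 1/distance**(2+n) rule."""
    return distance_factor ** (-gravity_exponent(n_extra))


# Doubling the separation between two masses:
for n in range(3):
    print(f"n = {n}: force falls to {force_ratio(2.0, n)} of its original value")
```

Doubling the distance cuts the force to a quarter when n=0, but to an eighth when n=1, so a measurement of how gravity weakens with distance fixes n directly.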

Some of us in this discussion thread are just curious laypersons that care to know a little bit more about these fascinating subjects. As such, we are not very capable of understanding many of the aspects casually mentioned in the thread by others.

I wonder if Prof. Strassler, or any other could give us some “layperson-friendly” brief description of what is meant by “String theory can never lead into testable prediction”, as it is one of the themes under discussion (whether or not that is proper to say).

Kind regards, Gastón

Well, unfortunately the statement by zephirawt is simply false; “Lorentz symmetry” is Einstein’s special relativity, and extra dimensions do not, contrary to zephirawt’s statement, lead to Lorentz violation; that’s why Einstein spent many years considering extra dimensional theories as unified theories — his hoped for “theory of `everything’ ” — in the late part of his life.

And it is not true that string theory can “never” lead into testable predictions. In fact, it already has made testable predictions, in the case where there are strings and extra dimensions as large as 10^-18 centimeters in size. That’s not what most people were expecting from string theory, but it was possible in principle. Indeed my Rutgers colleague Scott Thomas recognized that looking for strings was one of the things that could be done very, very early in LHC data, and that’s why, if you look at http://arxiv.org/pdf/1010.0203v2.pdf page 3, you’ll see experimental data testing string theory, based on his predictions (which unfortunately are unpublished). The green dashed lines on the plot are string theory predictions; the fact that the data (dots) shows nothing similar falsifies the idea that there are strings as large as 10^-18 centimeters in size. So that idea — that there are strings of such a size — is wrong, but certainly not untestable. The problem is that the idea wasn’t that plausible in the first place, so most people don’t make a big deal out of it. But it does falsify the statement that all versions of string theory are ***in principle*** untestable.

What cannot be tested now (but certainly not “never”) is the more popular (but not necessarily correct, of course) idea that all the particles of nature are really strings, but that these strings are only 10^(-33) centimeters in size. That is far too small a size to be measured in an accelerator. If we are to observe these strings someday, directly or through some indirect effects, it will have to be with a method that no one has yet invented. But “never” is a long time, and I’m sure Newton didn’t imagine we’d someday have the internet.

String theory can never lead into testable prediction, because it considers extradimensions and Lorentz symmetry, whereas the extradimensions will manifest itself just with Lorentz symmetry violation. http://aetherwavetheory.blogspot.cz/2009/02/consistence-problem-of-string-theory.html

That’s simply false.

Why?

See the answer to the question just below yours. 1. Not only is string theory testable in principle, particular realizations of it (not very plausible, but certainly possible) have been tested at the LHC already. 2. And your statement that extra dimensions necessarily violate Lorentz symmetry is wrong; Kaluza and Klein, who introduced the possibility of extra dimensions many many decades ago (long before string theory) were not idiots, nor was Einstein when he built upon their work in his attempt to build a unified field theory over the last decades of his life. 3. Furthermore (though this is a point which often gets lost in the hullabaloo about string theory) string theory DOES predict that there must exist a huge number of massive particles, such as might arise in an extra dimensional theory, but it DOES NOT strictly predict that those particles must arise from geometrically meaningful extra dimensions. I’m not saying anything new or radical; there was a whole program of study in the early 1990s that people seem to forget to mention: see for example these lectures by Joe Lykken, http://arxiv.org/abs/hep-ph/9511456 . In these versions of string theory — still the same classic string theory that everyone from Ed Witten to Brian Greene loves dearly, and the one other people detest — the role of the extra dimensions is played by some other non-geometric effect, so there’s always something extraneous that you have to add in; but it is not something that has a nice geometric interpretation, so you can’t argue that somehow it’s the extra dimensions in string theory that are its downfall… that makes no sense.

Hello,

Prof. Strassler’s point gets strong support from the Scientific American article: http://www.scientificamerican.com/article.cfm?id=search-for-new-physics

Best regards,

Klaus

Matt, is there a prominent calculation of a fairly clean, `standard candle’ final state that Blackhat gets right but that is extremely messy with Feynman Diagrams?

Hmmm. I’m not sure what you’re really wanting here. I think probably the best answer is to look at the talk I referenced by Bern

http://www.int.washington.edu/talks/WorkShops/int_11_3/People/Bern_Z/Bern.pdf

and look at page 9, which shows a whole set of calculations, some of which [in blue] were done with Feynman diagrams (and they’re all messy, but people wade through them) and some [in red] with the unitarity-based methods of Bern, Kosower, Dunbar and Dixon. Then look at page 13, which shows the ones done specifically by BlackHat. [Each of these calculations takes a long while to put together.] Now the question is whether you view Z + 3 jets as a clean standard candle. I would say it is, if the Z decays to leptons; there’s not that much top background if you make some other requirements. But the most data is obtained for W + jets; see page 32 of Bern’s talk. There’s more in later pages worth reading.

The calculation of W + 4 jets and Z + 4 jets is the most complicated calculation so far, as far as I am aware. That makes BlackHat a current leader, though I’m not expert enough to know where the Feynman diagram folks are in their calculations right now.

Nice article, gives insight into the string theory “wars”.

However, one issue: the Scientific American article you’ve linked is behind a paywall.

Yep, nothing I can do about that.

Does BlackHat get the observed g-2 for the muon?

Is this a question you’re just pulling out of the sky, based on something you’ve read? Or are you in a position to understand, at an expert level, what it takes to calculate and measure g-2?

Yes I can calculate g-2 for a spin 1/2 fermion, at least to leading order, without looking in a book, and I understand how g-2 was originally measured for electrons. I don’t know how the measurement is made for a muon. So no, I’m no expert. Sorry to trouble you, it won’t happen again.

Sorry, I was just trying to understand what level I should answer you at. It’s hard to guess that sometimes from what people write. It’s much better if you provide me with more context, since I have a very diverse readership. A single line question could be serious or a joke, could be from an expert who already knows the answer and is testing me or could be from a novice who doesn’t understand the question, could hide an agenda, etc. I have no idea how to answer a comment like that. You have to give me more context, otherwise it is easy for me to misunderstand what you’re getting at.

If you are expert enough to calculate leading-order corrections in Quantum Field Theory, then you probably know that there are various subtleties in the muon’s g-2, and that there was a whole controversy about previous calculations, including a sign error, and that also there are non-perturbative contributions that have to be added in. So g-2 for the muon, at the current level of precision, is quite a messy story, and I’m not expert enough to update you on the situation. But I don’t believe the BlackHat authors are planning to get into that mess right now; they are working mainly on one-loop calculations with many particles in the final state, which are the most important for a diverse range of measurements at the LHC.

I was striving for brevity. Your gracious explanation is unreservedly accepted and your further comments are very interesting.

Having worked on both N=4 SYM and QCD calculations, I think that the benefit of string theory to QCD calculations is somewhat overhyped. While the BCF paper has been inspirational, the revolution came due to the Ossola, Papadopoulos, Pittau method. If one is honest, they deserve 80% of the credit for all the wonderful things that happened in calculating one-loop amplitudes. The OPP paper was based on another very beautiful paper, by del Aguila and Pittau, which had nothing to do with string theory but involved a model builder.

Another aspect forgotten due to this hype, which is rather annoying for those of us who think that inventing methods directly for QCD calculations is usually much better motivated than working on N=4 SYM and string theory, is that progress in N=4 SYM in the last 10 years is also very much due to the calculation methods developed on the QCD side for multi-loop amplitudes, as well as the excellent understanding of QCD factorization.

Luckily, the best string theorists appreciate these QCD contributions very much. Unfortunately, they are not always heard loudly. I think that there is room for everyone to work on what they are attracted to the most, and the field(s) would be much better off with a little less arrogance from the string theory side.

Babis — thanks for your comments. Since you’re one of the world’s experts on this subject, I’m obviously not going to argue with your interpretation. And I do know that the OPP paper was a very important contribution.

My own understanding of the relative importance of different advances (BCF vs. OPP in particular) is shaped in part by my conversations with the BlackHat experts, and they tend to emphasize BCF a bit more than OPP in their talks and in their Scientific American article. I also checked with them to be sure that I was not misrepresenting them or their views before I put out the article. So it may be that you and they have slightly different views of the relative importance of BCF, or that I misrepresented their views by not emphasizing OPP’s role strongly enough; I’m not sure.

The point of my article, of course, was not to weigh the relative contributions, but to point out that string theory in this case made an indirect but non-trivial absolute contribution to something that actually matters to physics. In doing so I was trying, as I’m sure you understood, to chart some middle ground. I was careful not to say that BlackHat comes entirely or largely from string theory (and of course it does not). You can see that on the 2nd slide I quoted from Zvi’s talk: the BCF advance is mentioned on one line on the second slide, but there are several other lines, including one that emphasizes the OPP paper, and none of them involve string theory directly.

Of course one other thing I didn’t mention is that it was the recognition that string theory calculations [combined with what we now call twistor methods, and were then called spinor-helicity methods] could give simple(r) methods for computing QCD amplitudes that got Bern and Kosower, and then Dixon, into this business in the first place back in the 90s. (And of course their work in turn inspired my Ph.D. thesis, which is itself an application of string theory-like methods to field theory.)

I’m not sure I entirely understand precisely what you’re referring to in the second paragraph. If you don’t want to go into it here in detail, perhaps at some point you could send me an email explaining what you meant… no rush. In future I may turn to this subject as well, so I’d like to make sure I’m educated properly.

This was really an important post for me. I read the article in May’s Scientific American by chance and realized its importance. But you have presented such interesting information on the way the BlackHat group used Witten’s ideas about Twistor space. (I have downloaded his article but doubt that I will be able to understand it.)

Not working anything like full time in Physics, I barely have time to read your posts much less the articles I download as a result of reading them. I did read Woit’s book “Not Even Wrong” a couple of years ago and was biased as a result of that about String Theory and its lack of ability to predict testable results. However, the use of Witten’s string theory work to produce the BlackHat program really makes that idea dated.

I love it when someone says something calm, reasonable and level-headed about something that matters. It happens so rarely. Thanks for the great read.

Well said. Same from me.

Q1. Which is valid: a) if we are to duplicate the conditions at the Big Bang ( t = 0 ) in order to “see” the fundamental field (“Z” particle) we will need to have all the energy at one point, zero space (zero time?), which is impossible, so we will never be able to “see” the characteristics of the initial field, or

b) the conservation laws could be a “hocus pocus” theory which although seems valid today may not have been in the infant universe. i.e. the physics may not be invariant.

Q2. Would a homogeneous universe, inflation, have field (s) with ripples or without ripples? Do fields create ripples or did ripples create fields which created more ripples which created more field and so on?

Matt states that “our theoretical LHC research is now spread very thin” – in the sense (as I understand him) that the LHC experiments produce a huge amount of data, but there appear to be (too) few theorists to make sense of these data in terms of new theories / ideas. While I personally cannot judge the situation, for the moment I believe Matt’s statement to be reasonable.

Educating new theorists, even if possible, is a matter of several years (or even decades for experienced scientists), so there is no short-term solution if this is the bottleneck.

So, isn’t it then more important to store as much of the LHC experimental data as possible, so that they can be used by a future generation of scientists? I am thinking of the “parking lot” for data that Matt described on another page a few days ago. “Parking” the data may not only be necessary because the computer power is currently not sufficient to process all the data in real-time, but also because the human power to analyze the data and draw conclusions still may need to be developed / expanded.

Well, unfortunately we don’t have that kind of time. If new phenomena are detectable in the data that are collected in 2015-2016, but nobody finds them until 2030, a decision may be taken meanwhile not to build another high-energy accelerator beyond the LHC. The potential loss of massive amounts of engineering knowledge and skilled employees in the intervening 15 years may make the next accelerator nearly impossible to build with the kind of reliability that a machine like the LHC requires. This kind of technology loss has happened before in history, and particle accelerators are very vulnerable to it right now. This is part of why I’m working full-time on LHC physics and have dropped all of my other research work.

“A pause from the usual stuff for a necessary reminder: please keep the comments of a non-personal nature. Talk about the science; if someone makes a mistake, say so, but don’t attack their personalities and intelligence.” I don’t think all the comments today meet this standard.

Sigh… perhaps you are right. I find it very hard to stay at a high standard when my views are so regularly being willfully distorted for someone else’s purposes. But I will keep trying.

I wasn’t just referring to your comments. There were others!

Yep, I often get too upset when I see bad things happen …

But it is Prof. Strassler’s right and even his duty to defend himself if what he says and thinks gets that brutally distorted by others who do not know what fair play is … !

Dilaton, calm down. Bob is right. One has to keep focused on the content; that’s what integrity is about.

It’s not just about integrity. You are more likely to win the battle of ideas if you keep the personal stuff out of the discussion.

I think it is the same thing, in the end. Because scientific integrity requires that the discussion remain focused on the facts of the matter. If you have a really good scientific argument, you shouldn’t have to insult other people and twist the facts in order to make your point. Although I know this, still, I have my weaknesses, especially when I see others who I believe to be violating these very same principles of scientific integrity, and not only getting away with it but prospering. The level of unfairness that one has to accept as a necessary price of trying to set the record straight is very high, and I find it difficult to handle.

Since a really powerful referee who dishes out the red cards appropriately is missing, the bad guys who do not respect any fairness or scientific integrity will always get away with it and the number of people who even cheer them on seems to increase.

They are so much louder, stronger, more powerful, and forcefully determined to make themselves widely heard and attain their destructive goals by any means that reasonable and balanced people, who try to keep up high (scientific) standards and calm things down to a middle ground, are just blown away by the brutality of the bad guys.

The biggest loser in such unfair games is science of course, which is a very very sad thing 🙁

But so may it be, it seems nothing can be done about it.

It’s of course just as bad in American political life, and surrounding the issue of climate change. And of course those are much more serious. But sadly similar. There’s nothing to do but keep to the high middle ground and hope the ocean doesn’t keep rising.

Yeah, it is not that important and maybe I should turn my attention and interest to something other than particle physics / cosmology / fundamental physics and the like. It is too painful to observe how it gets destroyed.

I have no hope that the ocean does not rise, things have gone way too far …

Now, now, it’s not THAT bad. Some parts of this field will certainly survive, and probably thrive, no matter what happens.

Very interesting article.

One comment. It’s not said directly, but there is an implication that the relative absence of Standard Model theory in the US is down to string theory, or rather political control by string theorists. However there has been a significant amount of phenomenology hiring for quite a while, and almost no string hiring for a few years. What is striking though is how little of the pheno hiring is SM theory – it’s almost all BSM, or dark matter, or model building. So I think the relative absence of SM theory in the US – which is almost total if you consider the ‘top’ universities – has deeper roots than just string theory.

Googling around, I found out that Milner studied theoretical physics at Moscow State University.

It even mentions that he was a candidate for a doctorate in particle physics, but I could not find whether or not he was awarded his PhD.

I could not find anything regarding postdoc studies either.

So, it turns out that Milner knows a lot more about particle physics than your average Joe, but he is not an expert on the subject matter.

I agree that it makes no sense to criticise Milner’s prize on the basis of it being awarded to string theory. It’s unfortunate that critics have taken this route, because it undermines the legitimate criticisms that can be levelled against the prize.

As it stands, would it be fair to characterise it as the award of a very large amount of money at the whim of a wealthy individual?

Milner may have more appreciation of physics than the average man in the street, and he may have more big cheese physics friends, but if the ultimate decision is the opinion of a lay individual who happens to have some cash, then that’s a problem. What anyone thinks of whoever ended up getting it is a side show. The more important issue is that it can’t be healthy for the scientific community to embrace a prize like this.

Perhaps the unfortunate nature of the side show is a reflection of that.

Dear Prof Strassler, I like it how you take out the ball in this article 🙂

What did you mean by saying that maybe you won’t survive long enough to write another article ? I hope you are ok …

It is awesome how these new methods to calculate scattering amplitudes are successfully applied to obtain better results from the LHC.

Check out Woit’s website today.

Aah, ok I can guess … :-/

Maybe I will scroll through but not look at it in too much detail, since I have sworn to myself to avoid looking at the dark side of the blogosphere. But I can still read your latest trigger article to calm down and make me happy again afterwards 🙂

Yes, as usual he’s distorting things and making himself look important — as though everything I write has to do specifically with him, rather than with those of my scientific colleagues who happen to share more of his views than I do.

Now I have seen enough over there 😉

Everybody can plainly see who is a serious and reasonable person from comparing this site and the other blog …

This nice article hopefully helps calm things down a little bit !

Maybe he feels picked on because he has a bad conscience (without admitting it) and he should !!!

That seems very unlikely to me.

Yes, my comment was not quite appropriate …

I rather meant he felt urged to post a rant against you on his blog because he knows very well what he is doing (wrong?).

What is amusing is that when coming to comment here, Dr. Woit always pretends to be a completely innocent good fellow wrongly accused of doing bad things; he just wants to help and provide useful links etc … Your crocodile’s tears response was so accurate, it made me LOL 😀

BTW, Dr. Woit should accuse and rant against ME (and not you) because I brought the issue of his spreading wrong, misleading, and dishonest comments in Nature up here … 😛

I think you have a large number of readers who like this site and your good work, they certainly see at a glance that YOU are the one who is an honest and serious scientist who does things right and NOT Dr. Woit … 😉

What’s wrong with the article on Woit’s site?

For ignorant impartial people like me, I think Woit’s site is balanced and fair just like his book, despite being titled “Not Even Wrong”. He even links to one string blog in particular, despite the author there having some sort of unbalanced hatred towards Mr Woit which only adds to my perception that the string community might be a little unhinged, desperate and comical even. To me, the article looks fair and presents you as someone who is fair and balanced and can see the whole picture.

The physics community is a dynamic social organism and will therefore automatically allocate resources to those disciplines that are more deserving than others for its progress, and not because of one book or blog someone has written, despite its effects.

“Woit’s site is balanced and fair just like his book”

LOL, is this a joke 😀 ?

It can’t be serious …

Distortion, like beauty, is in the mind of the beholder. If I said anything more I would be certain this post is deleted.

I don’t delete posts unless they are mean-spirited, inaccurate on the science, or bothering my readers with incessant clutter. A statement like yours, with no discussion of who you are (i.e., what you think is distorted, why you think so, what your background is) does nothing for anyone.

Okay then. I am not an expert in any way on qft..many decades since I studied it..so many decades that ST was only being whispered about when I was a graduate student. So, though a working physicist, I can only say what my perception as far as hep-th is: a healthy skepticism of anything beyond the SM is indeed called for. If you wish to paint PW’s skepticism as “unhealthy” or in your words (perhaps I misunderstood) as “distorting”, then that is indeed your right..but I will view it as only your subjective opinion.

Not only am I not going to delete your question, I’m going to answer it in great detail; it’s an excellent question. And indeed, the very distortion I’m referring to relates to the fact that you got the impression (presumably from PW — certainly not from me!) that I myself have no skepticism in the matter — and that others of my colleagues are not skeptical of there being anything beyond the Standard Model accessible at the LHC.

I invite you to look around this website and find, anywhere, an indication that I do not have such a healthy skepticism. You will nowhere find me promoting a particular theory or point of view on the matter; I just describe the most popular theories, so that my readers can understand what they are. You can attend one of my public talks, or one of the colloquia I give at physics departments, where I always state very clearly that the naturalness problem is not necessarily a problem that must be solved by nature, just a puzzle for physicists. You can listen to what I had to say at the SEARCH workshop, where I stated very clearly that naturalness as a criterion for distrusting the Standard Model came into serious question in the late 90s, with the discovery of the cosmological constant, http://profmattstrassler.com/2012/05/04/search-workshop-panel-discussion-on-lhc-posted-online/ .

Moreover, as is emphasized by all my best theory colleagues whenever they give lectures to students, the Standard Model has huge advantages that none of the other theories have. I believe I quote my colleague Nima Arkani-Hamed correctly (or maybe it was me, or both of us) saying “The real problem is that all BSM theories suck.” BSM means “beyond-the-Standard-Model”. The Standard Model has no significant violation of baryon number or lepton number automatically; it automatically has no large flavor-changing neutral currents; and so on. Every popular BSM theory has a problem avoiding these things — every one. And every good and honest theorist knows this. In each case there are solutions, but they aren’t that pretty. It’s darn good reason for skepticism.

But you also have to understand just what a spectacular conundrum it is if the Standard Model is correct at LHC energies. Keep in mind that the Standard Model has more than a dozen parameters that just go in by hand (masses and interaction strengths) not to mention that there’s no explanation for the matter content — why three generations and not eight? why three forces and not six? why one Higgs and not seventeen? It doesn’t explain why there’s no violation of the CP symmetry in the strong interactions; it doesn’t explain the scale of neutrino masses; and it doesn’t explain why there’s dark matter and a cosmological constant. It’s certainly not a complete theory. So there’s certainly something more out there. We are all hoping the LHC will tell us something about these mysteries, but we all know it may not. And we say so in public, and to our students; no one is keeping secrets here.

And then there’s the naturalness “problem”, or “conundrum”, or “puzzle”. You can see how I addressed this in my talk at the Orsay Higgs workshop, http://indico2.lal.in2p3.fr/indico/conferenceDisplay.py?confId=1747; it’s a huge mystery, and there are many possibilities as to what it means. The point is that we have no examples (as far as I am aware) in nature of a spin-zero particle that interacts as strongly as the Higgs does and is also much lighter than the scale of any other new phenomena, just by accident. That includes many, many examples in condensed matter, which uses the same quantum field theory techniques and does not see this kind of thing happen on a regular basis. And so we are left wondering why the Standard Model is the unique theory in nature that has this property. It could be for many reasons, including ones we haven’t thought of. But we want to know what the right reason is, not guess it.