Since it’s been the main news story of the last week, perhaps it would be useful to do a quick summary of what the CMS and ATLAS experiments at the Large Hadron Collider [LHC] have been saying, over the past fifteen months, about their search for the process in which a Higgs particle is produced and decays to two photons.

Before we start, let me remind you that in statements about how uncertain a measurement is (and all measurements have some level of uncertainty; no knowledge is perfect), a “σ”, or “sigma”, is a statistical quantity called a “standard deviation”. A 5σ discrepancy from expectations is impressive and a 3σ one intriguing, but anything less than 2σ is very typical, indicating merely the usual coming and going of statistical flukes as real data fluctuate around the truth. Note also that the look-elsewhere effect has to be accounted for, though usually a 5σ discrepancy is convincing even before that correction is applied. And of course a discrepancy may mean either a discovery or a mistake; that’s why it is important that two experiments, not just one, see a similar discrepancy, since it is unlikely that both experiments would make the same mistake.
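To make the σ language a bit more concrete, here is a minimal Python sketch (standard library only) that converts a significance into a one-sided tail probability, assuming a Gaussian distribution, and applies a deliberately crude look-elsewhere correction. The function names and the trials factor `n_trials` are hypothetical illustrations; the experiments estimate the look-elsewhere effect with far more careful methods.

```python
import math
from statistics import NormalDist


def p_value_from_sigma(z: float) -> float:
    """One-sided probability that a Gaussian fluctuation exceeds z sigma."""
    return 0.5 * math.erfc(z / math.sqrt(2.0))


def sigma_from_p_value(p: float) -> float:
    """Inverse of p_value_from_sigma."""
    return NormalDist().inv_cdf(1.0 - p)


def global_sigma(local_z: float, n_trials: int) -> float:
    """Crude look-elsewhere correction (hypothetical model): treat the search
    as n_trials independent places where a fluke could have shown up."""
    p_local = p_value_from_sigma(local_z)
    p_global = 1.0 - (1.0 - p_local) ** n_trials
    return sigma_from_p_value(p_global)


for z in (2, 3, 5):
    print(f"{z} sigma -> one-sided p = {p_value_from_sigma(z):.2e}")
# prints 2.28e-02, 1.35e-03 and 2.87e-07 for 2, 3 and 5 sigma

print(f"local 2.8 sigma, 20 trials -> global {global_sigma(2.8, 20):.1f} sigma")
```

With a hypothetical trials factor of 20, a local 2.8σ excess shrinks to roughly 1.6σ globally, which illustrates how an excess can fall below 2σ once the look-elsewhere effect is included. The real trials factor depends on the mass range searched and the mass resolution, so the number 20 here is only for illustration.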

OK: here are the results as they came in over time, all the way back to the inconclusive hints of 15 months ago.

December 2011:

- ATLAS (4.9 inverse fb of data at 7 TeV): excess 2.8σ (where 1.4σ would be expected for a SM Higgs); less than 2σ after accounting for the “look-elsewhere effect”.

- CMS (4.8 inverse fb of data at 7 TeV): excess just over 2σ (where 1.4σ would be expected for a SM Higgs); much less than 2σ after accounting for the “look-elsewhere effect”.

July 2012:

- ATLAS (reanalyzing the 7 TeV data and adding 5.9 inverse fb of data at 8 TeV): signal 4.5σ (where 2.4σ was expected for a SM Higgs); 3.6σ after the “look-elsewhere effect”; best estimate of size of signal divided by that for a SM Higgs: 1.9 ± 0.5 (about 1.8σ above the SM prediction).

- CMS (reanalyzing the 7 TeV data and adding 5.3 inverse fb of data at 8 TeV): signal 4.1σ (where 2.5σ was expected for a SM Higgs); 3.2σ after the “look-elsewhere effect”; best estimate of size of signal divided by that for a SM Higgs: 1.6 ± 0.4 (about 1.5σ above the SM prediction).

November/December 2012:

- ATLAS (increasing the 8 TeV data to 13.0 inverse fb): signal 6.1σ (3.3σ expected for a SM Higgs); 5.4σ when the look-elsewhere effect is accounted for; best estimate of size of signal divided by that for a SM Higgs: 1.8 ± 0.4 (about 2σ above the SM prediction).

- CMS: No update

March 2013:

- ATLAS (taking the full 7 and 8 TeV data sets): signal 7.4σ (4.1σ expected for a SM Higgs); best estimate of size of signal divided by that for a SM Higgs: 1.65 ± 0.30 (slightly more than 2σ above the SM prediction).

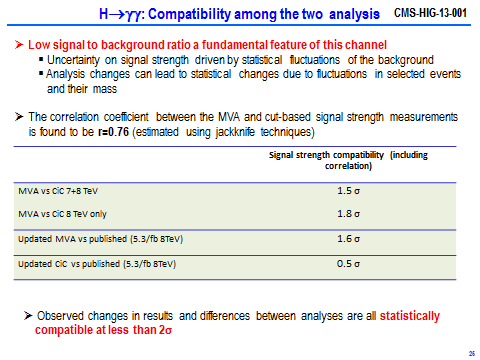

- CMS (taking the full 7 and 8 TeV data sets) uses two different methods as a cross-check: one of them complex and (on average) more powerful, the other simpler but (on average) less powerful. For the best estimate of size of signal divided by that for a SM Higgs, one method gives 0.8 ± 0.3 and the other gives 1.1 ± 0.3. Both of these are within 1σ of the SM prediction and within 2σ of the CMS July result.
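The “about Nσ above the SM prediction” figures in the list are simply the distance of each best estimate from 1 (the SM value), divided by its quoted uncertainty. Here is a minimal sketch of that arithmetic, assuming symmetric Gaussian experimental uncertainties and ignoring the uncertainty on the SM prediction itself. The labels and the helper `pull_from_sm` are ours, and the text does not say which CMS method gave which value, so they are just called “method 1” and “method 2”.

```python
# Best estimates of (signal size) / (SM Higgs signal size), with 1-sigma
# uncertainties, as quoted in the list above.
RESULTS = [
    ("ATLAS, July 2012", 1.9, 0.5),
    ("CMS, July 2012", 1.6, 0.4),
    ("ATLAS, Nov/Dec 2012", 1.8, 0.4),
    ("ATLAS, March 2013", 1.65, 0.30),
    ("CMS, March 2013, method 1", 0.8, 0.3),
    ("CMS, March 2013, method 2", 1.1, 0.3),
]


def pull_from_sm(mu: float, sigma: float) -> float:
    """Signed number of standard deviations between mu and the SM value of 1.

    Assumes a symmetric Gaussian uncertainty and neglects the theoretical
    uncertainty on the SM prediction.
    """
    return (mu - 1.0) / sigma


for label, mu, sigma in RESULTS:
    print(f"{label}: {mu} ± {sigma} -> {pull_from_sm(mu, sigma):+.1f} sigma from SM")
```

This reproduces the figures in the list: about 1.8σ and 1.5σ for ATLAS and CMS in July, 2σ for ATLAS in late 2012, about 2.2σ for ATLAS in March 2013, and less than 1σ in magnitude for both CMS methods.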

To understand how consistent the two new CMS results are with each other, you have to consider how the two studies are correlated (since they select events for study from the same pile of data). Because the two methods select and discard candidate events in different ways, they don’t include exactly the same data. CMS’s simulation studies indicate that about 50 percent of the background events and 80 percent of the signal events are common to the two studies. In the end, the conclusion (see the figure below) is that the two results are consistent at the 1.5σ level (1.8σ if one considers only the 8 TeV data); in other words, they are reasonably consistent with one another.
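Why the correlation matters can be seen from a back-of-the-envelope sketch. Treating the two CMS results as Gaussian estimates linked by a single correlation coefficient ρ (our simplifying assumption; CMS’s actual procedure, based on simulating the overlapping events, is more involved), the significance of their difference is |μ1 − μ2| / sqrt(σ1² + σ2² − 2ρσ1σ2). The helper names below are hypothetical.

```python
import math


def difference_significance(mu1, s1, mu2, s2, rho):
    """Significance (in sigma) of mu1 - mu2 for two Gaussian estimates with
    uncertainties s1, s2 and correlation coefficient rho."""
    variance = s1**2 + s2**2 - 2.0 * rho * s1 * s2
    return abs(mu1 - mu2) / math.sqrt(variance)


def implied_correlation(mu1, s1, mu2, s2, z):
    """Correlation coefficient for which the difference comes out as z sigma."""
    sigma_diff = abs(mu1 - mu2) / z
    return (s1**2 + s2**2 - sigma_diff**2) / (2.0 * s1 * s2)


# The two CMS March 2013 estimates quoted in the text (rounded values).
mu1, s1, mu2, s2 = 0.8, 0.3, 1.1, 0.3

print(f"if independent: {difference_significance(mu1, s1, mu2, s2, rho=0.0):.2f} sigma")
print(f"rho needed for 1.5 sigma: {implied_correlation(mu1, s1, mu2, s2, z=1.5):.2f}")
```

If the two results were independent, a difference of 0.3 would be only about a 0.7σ effect; for the quoted 1.5σ, these rounded numbers require ρ of roughly 0.78, a strong correlation that is at least plausible given how many events the two analyses share. Because the inputs are rounded, this is a check on the logic rather than a reproduction of CMS’s number.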

You can also ask how consistent the new results are with the old ones from July. Given that the uncertainty on the July result was very large (1.6 ± 0.4 times the Standard Model prediction, i.e. a 25% uncertainty at 1σ and 50% at 2σ), it should not surprise you that CMS finds each of its new results to be consistent with the July result at below the 2σ level.

Meanwhile, all of the ATLAS results are closely compatible with each other. This is more what one would naively expect, but not necessarily what actually happens in real data. Of course ATLAS’s results aren’t giving a consistent mass for the new particle yet, whereas CMS’s are doing so… well, this is what happens with real data, folks.

The real issue is whether ATLAS’s measurements and CMS’s measurements of the two photon rate are compatible with each other. Currently they are separated by at least 2σ and maybe as much as 3σ (a very rough estimate), which is not unheard of but is somewhat unusual. Well, whether the cause is an error or a statistical fluke or both, it unfortunately leaves us in a completely ambiguous situation. On the one hand, CMS’s results agree with the Standard Model prediction to within about 1σ. On the other hand, ATLAS’s results are in tension with the Standard Model prediction by a bit more than 2σ. We have no way to know which result is closer to the truth — especially when we recall that the uncertainty in the Standard Model prediction is itself about 20%. If ATLAS and CMS had both closely agreed with the Standard Model we’d be confident that any deviations from the Standard Model are too small to observe; if they both significantly disagreed in the same way, we’d be excited about the possibility that the Standard Model might be about to break down. But with the current results, we don’t know what to think.

So as far as the Higgs particle’s decays to two photons are concerned, we’ve gotten as much (or almost as much) information as we’re going to get for the moment; and we have no choice but to accept that the current situation is ambiguous and to wait for more data in 2015. Of course the Standard Model may break down sooner than 2015, for some other reason that the experimenters have yet to uncover in the 2011-2012 data. But the two-photon measurement won’t be the one to crack the armor of this amazing set of equations. (For those who got all excited last July: you were warned that the uncertainties were very large and the excess might well be ephemeral.)

11 Responses

There was a theory paper yesterday, http://arxiv.org/abs/1303.3590. The authors attempt to estimate some of the N3LO corrections to Higgs production in gluon fusion. The claim is that the cross section might be increased by up to 17% on top of the NNLO result.

I don’t know how reliable that prediction is, but at the very least it is an indication for the size of higher order corrections.

This is one of the things to be concerned about, of course. How good are our calculations?

Of course, the discrepancy between ATLAS’s result and CMS’s result can’t be resolved by a shift in the theoretical calculation.

Is it possible that the universe is not expanding, absolutely, but rather oscillating and we live (time) in the expansion phase of the cycle?

How could the SM be valid if it cannot explain gravity? In other words, how could there be a zero value Higgs field with any account for the lesser force of gravity?

Composition must include gravity. What resonates the Higgs field?

Could we be measuring the shadows of the real stuff and not the real stuff itself?

The Standard Model can be valid but incomplete. It is valid for a very wide range of phenomena, those in which gravity plays no role. And it is consistent with classical Einsteinian gravity, just not with quantum gravity.

Matt, not sure what you are saying here. The SM, and Quantum Mechanics in general, is fundamentally inconsistent with “classical” General Relativity, all of it! As soon as you have curved 4D space-time, its equations don’t make sense. It’s Special Relativity and its flat space-time (not “classical Einsteinian gravity”) with which the SM is consistent. That said, why and how it works so well anyway is one of the conundrums we face. And it’s the common misunderstanding, from Newtonian physics, that “gravity” is due to “mass” that confuses people into thinking that the Higgs and gravity must be closely related.

The statement is one of effective field theory; the Standard Model coupled to gravity works as an effective field theory as long as you understand what you can and can’t compute, as given by the rules of effective field theory. You can certainly compute how particles behave if classical gravitational fields are weak. It’s not true the equations don’t make sense at all; they just have well-understood limitations, and you’d better not try to calculate anything too precisely.

Professor, is there any substance to the “vanishing quasar” at the LHC?

Quasars are giant bright objects out in space; the LHC neither creates nor observes them. So I have no idea what you are referring to…

I was told “quasar”, but he may have been referring to a high-energy gamma ray that just dissipated, scattering into other particles; he was certainly not referring to a micro black hole.

I suppose the question is not so much about an eleven-dimensional universe, but whether it is possible for us, Homo sapiens, to start the process of dissipation of mass. This is in reference to the fact that the energy of the now-validated Higgs boson is so close to the unstable vacuum region: could we extinguish the fire that made us?

Is it plausible that if the universe continues to expand, it will not make “Island Universes” like those Dr. Lawrence Krauss refers to, but instead the span between the galaxies will become so great that regions of zero vacuum will be created, starting the process of extinguishing the ripples?

Sorry for my pessimism so close to Easter … 🙂

Matt: “So as far as the Higgs particle’s decays to two photons, we’ve gotten as much (or almost as much) information as we’re going to get for the moment; and we have no choice but to accept that the current situation is ambiguous and to wait for more data in 2015. Of course the Standard Model may break down sooner than 2015, for some other reason that the experimenters have yet to uncover in the 2011-2012 data. But the two-photon measurement won’t be the one to crack the armor of this amazing set of equations. (For those who got all excited last July; you were warned that the uncertainties were very large and the excess might well be ephemeral.)”

This is the fairest statement among all physics blogs. Most of them simply combine the two disjoint data sets (which almost disprove each other) to get a happy “1”, in perfect agreement with the Standard Model.