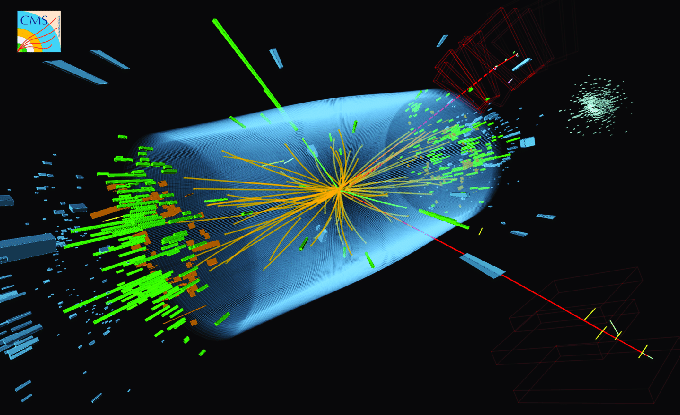

The Large Hadron Collider [LHC] is moving into a new era. Up to now the experimenters at ATLAS and CMS have mostly been either looking for the Higgs particle or looking for relatively large and spectacular signs of new phenomena. But with the Higgs particle apparently found, and with the rate at which data is being gathered beginning to level off, the LHC is gradually entering a new phase, in which the dominant efforts will involve precision measurements of the Higgs particle’s properties and careful searches for new phenomena that generate only small and subtle effects. This will be the story for the next couple of years.

The LHC began gathering data in 2010, so we’re well into year three of its operations. Let me remind you of the history, showing the year, the number of proton-proton collisions (“collisions” for short) generated, or estimated to be generated in future, for the ATLAS and CMS experiments, and the energy of each collision (“energy” for short, in units of TeV):

- 2009: a small number of collisions at up to 2.2 TeV energy

- 2010: about 3.5 million million collisions at 7 TeV energy

- 2011: about 500 million million collisions at 7 TeV energy

- through 6/2012: about 600 million million collisions at 8 TeV energy

- all 2012: about 3,000 million million collisions at 8 TeV

- 2013-2014: shutdown for upgrades/repairs (though data analysis will continue)

- 2015-2018: at least 30,000 million million collisions at around 13 TeV

The number of collisions obtained at the LHCb experiment is somewhat smaller, as planned — and that experiment has been mostly aimed at precision measurements, like this one, from the beginning. There’s also the one-month-a-year program to collide large atomic nuclei, but I’ll describe that elsewhere.

Let’s look at what’s happened so far and what’s projected for the rest of the year. 2010 was a pilot run; only very spectacular phenomena would have shown up. 2011 was a huge jump in the number of collisions, by a factor of 150; it was this data that started really ruling out many possibilities for new particles and forces of various types, and that brought us the first hints of the Higgs particle. Roughly doubling that data set at a slightly higher energy per collision, as has been done so far in 2012, brought us convincing evidence of the Higgs in both the ATLAS and CMS experiments, enough for a discovery. The total data set may be tripled again before the end of the year.
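The growth factors quoted in this paragraph can be checked directly from the collision counts in the list above. The snippet below is only a rough sketch of that arithmetic: the dictionary keys and the function name are made up for illustration, and the tiny 2009 and 2010 data sets are ignored when forming the running totals.

```python
# Collision counts from the list above, in units of "million million" (10^12).
# The key names are illustrative labels, not official run names.
COLLISIONS = {
    "2010": 3.5,
    "2011": 500.0,
    "2012_through_june": 600.0,
    "2012_full_year": 3000.0,
}


def growth_factors(counts):
    """Return (2011 jump, mid-2012 growth, end-of-2012 growth) as plain ratios."""
    jump_2011 = counts["2011"] / counts["2010"]

    # Data in hand by mid-2012: all of 2011 plus the first half of 2012.
    total_mid_2012 = counts["2011"] + counts["2012_through_june"]
    growth_mid_2012 = total_mid_2012 / counts["2011"]

    # Projected data by the end of 2012: all of 2011 plus all of 2012.
    total_end_2012 = counts["2011"] + counts["2012_full_year"]
    growth_end_2012 = total_end_2012 / total_mid_2012

    return jump_2011, growth_mid_2012, growth_end_2012


jump, doubling, tripling = growth_factors(COLLISIONS)
print(f"2010 to 2011: x{jump:.0f}")
print(f"2011 to mid-2012 total: x{doubling:.1f}")
print(f"mid-2012 to end-2012 total: x{tripling:.1f}")
```

The ratios come out close to the round numbers used in the text (a factor of roughly 150, a rough doubling, and a rough tripling).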

In an experiment, rapid and easy discoveries can occur when there’s a qualitative change in what an experiment is doing.

- Turning it on and running it for the first time, as in 2010.

- Increasing the amount of data by a huge factor, as in 2011.

- Changing the energy by a lot, as in 2015.

But 2012 is different. The energy has increased by only about 15%. The amount of data is going up by perhaps a factor of 6, which in many cases improves the statistical significance of a measurement only by the square root of 6, or about a factor of 2.5.
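To make the square-root scaling concrete, here is a minimal sketch. It assumes the simplest situation, in which uncertainties are purely statistical and both signal and background grow in proportion to the amount of data; the function name is just an illustrative label.

```python
import math


def significance_gain(data_factor):
    """Improvement in statistical significance when the data grows by data_factor.

    Assumes purely statistical uncertainties, so significance scales
    like the square root of the amount of data.
    """
    return math.sqrt(data_factor)


# Energy per collision went from 7 TeV (2011) to 8 TeV (2012).
energy_increase = (8.0 - 7.0) / 7.0

print(f"energy increase: {energy_increase:.1%}")
print(f"gain from 6x more data: x{significance_gain(6.0):.2f}")
```

Under the same assumption, getting a tenfold improvement in significance would take a hundredfold increase in data, which is why more running at the same energy pays off slowly.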

Up until now, rapid and easy discoveries have been possible. Except for the Higgs search, which was a high precision search aimed at a known target (at the simplest type of Higgs particle [the “Standard Model Higgs”], or of a type that roughly resembles it), most of the other searches done so far at the LHC have been broad-brush searches, sort of analogous to quickly scanning a new scene with binoculars looking for something that’s obviously out of place. Not that anything done at the LHC is really easy, but it is fair to say that these are the easiest type of searches. You have a pretty good idea, in advance, of what you ought to see; you do your homework, in various ways, using both experimental data and theoretical prediction, to make sure in advance that you’re right about that; and then you look at the data, and see if anything large and unexpected turns up. If not, you can say that you excluded various possibilities for new particles or forces; if it does, you look at it carefully to decide whether you believe it is a statistical fluke or a problem with your experiment, and you try to find more evidence for it in other parts of your data. This type of technique has been used for most of the searches done so far at the LHC, and given how rapidly the data rates were increasing until recently, that was perfectly appropriate.

But nothing has yet turned up in those “easy” searches; the only thing that’s been found is a Higgs-like particle. And now, as we head into the latter half of 2012, we are not going to get so much more data that an easy discovery is likely (though it is still possible). If an easy search of a particular sort has already been done, and if it hasn’t already revealed a hint of something a little bit out of place, then increasing the data by a factor of a few, while not significantly increasing the energy, isn’t likely to be enough for a convincing signal of something new to emerge in the data by the end of the year.

So instead, experimenters and theorists involved with the LHC are gearing up for more difficult searches. (Not that these haven’t been going on at all — searches for top squarks are tough, and these are well underway — but there haven’t been that many.) Instead of quickly scanning a scene for something amiss, we’re now in need of knowing the expected scene in detail, so that a careful study of the scene can allow us to recognize something which is just slightly out of place. To do this, we now need to know much more precisely what is supposed to be there.

In principle, what we expect to be there is predicted by the “Standard Model”, the equations that we use to predict the behavior of all the known elementary particles and non-gravitational forces, including a Higgs particle of the simplest type (which so far is consistent with existing data). But getting a prediction to compare with real data isn’t easy or straightforward. The equations are completely known, but predictions are often needed with a precision at the 10% level or better, and that’s not easily obtained. Here are the basic problems:

- Calculations of the basic collisions of quarks, gluons and anti-quarks with precision of much better than 50% are technically difficult, and require considerable cleverness, finesse and sweat; for sufficiently complicated collisions, such calculations are often not yet possible.

- The prediction also requires an understanding of how quarks, gluons and anti-quarks are distributed within the proton; our imprecise knowledge (which comes from previous experiments’ data, not from theoretical calculation) often limits the overall precision of the prediction of what the LHC will observe.

- The predictions need to take account of the complexities of real LHC detectors, which have complicated shapes and many imperfections that the experimenters have to correct for.

In other words, the Standard Model, as a set of equations, is very easy to write down, but reality requires suffering through the complexities of real experimental detectors, the messiness of protons, and the hard work required to get a precise prediction for the basic underlying physical process.

And so as we move through the rest of 2012 and into the data-analysis period of 2013-2014, the real key to progress will be combining predictions from the Standard Model with measurements that check that these predictions are accurate as well as precise, so that many searches can be carried out for particles or forces that generate small and/or subtle signals. It’s the next phase of the LHC program: careful study of the Higgs particle (checking both what it is expected to do and what it is not expected to do) and methodical searching through the data for signs of something that violates the predictions of the Standard Model. We’ll go back to easy-search mode after the 2013-2014 shutdown, when the LHC turns on at higher collision energy. But until then, the hard work of highly precise measurements will increasingly be the focus.

25 Responses

Regarding how far can this boson deviate from the SMH and still be called a Higgs boson, I would say it has to deliver the Higgs mechanism. The postulated Higgs is unique not because it is a heavy new boson, but because it has a non zero (and in fact extremely large – at the scale of the weak interaction) vacuum expectation energy – or loosely speaking density in the vacuum. I believe the only way this can be shown is if its production/decay cross sections, at least to other bosons, are in accord with the Higgs mechanism.

Hi,

Thanks for the continuing interesting blog posts!

I have a couple of questions and would greatly appreciate it if you could read them (my apologies if you’ve already answered them elsewhere – I have a problem with not paying attention – and you’ve already answered them to some extent above also)…

1) The LHC seems to have just managed to allow discovery of this 125 GeV Higgsesque particle in a targeted search using 7 TeV beamlines. What is the mass limit we can expect (i.e. highest mass that can cause a significant/detectable bump above the background) in searches for the lightest supersymmetric particle? 125 GeV? 1 TeV? 5 TeV? 7 TeV?

2) Suppose some cosmological discovery is made, or dark matter detection searches hit pay-dirt, or an unexpected clue is gleaned from the Higgsesque particle. A convincing argument is made that there *must* be a lightest supersymmetric particle in the range 100-1000 GeV, and that this is super-important to the universe (e.g. makes up dark matter). What then is the best strategy for finding it? (i.e. what limits discovery & where does new funding go?) Ramp up the LHC to higher energies? More collisions? More computing power? Build a new synchrotron collider? Build a new linear collider? Theoretical advances to change it from the something-amiss search to a targeted search?

3) With all these millions upon millions of collisions at the LHC having to be sifted through at at least some level to find the Higgsesque particle, how on Earth were scientists thinking – with paltry early 1990s computing power – they were going to find the Higgs with the SSC?

Thanks!

1) supersymmetric particles may not appear as bumps at all; bumps only appear when all of a particle’s decay products can be observed, but if the lightest supersymmetric particle is itself undetectable (e.g., if it is the dark matter) then none of the supersymmetric particles will give you a bump in a plot similar to the Higgs bump. Strategies are more subtle than that. There isn’t a model-independent answer to your question because it depends what the lightest supersymmetric particle actually is; most of the time the lightest one isn’t the easiest to discover, because it’s the decay products as a heavier one decays to a lighter one that are easier to observe. So I’m afraid your question isn’t the one you ought to ask, and none of the questions you can ask are straightforward or have straightforward answers. I’d have to write a whole article about it.

2) The answer to your question is very sensitive to (a) what exactly is the mass (I would give a very different answer at 100 GeV than at 1000 GeV) and (b) what type of particle is it (what mixture of photino, zino and higgsino is it? is there an admixture with another particle?) and (c) are there any other superpartner particles accessible at the LHC? If the particle is light enough, or if other superpartner particles are light enough (e.g., gluinos below 3 TeV), the LHC may find it. If it is a bit heavier, an electron-positron machine may find it. If it is too heavy, neither will find it, so a higher-energy version of the LHC or a higher-energy electron-positron machine would perhaps be necessary to find it. There’s no simple answer to these questions, and studies of these questions are still evolving as LHC data comes in and restricts the possibilities.

3) Because in 1980 you could calculate, using a combination of equations on paper and a simple computer program, the rate for two photons from the Standard Model Higgs, and you could calculate/estimate the production rate of two photons via Standard Model processes, and you could combine them to estimate how many collisions you would need in order to get Signal/Sqrt[Background] bigger than 5. You don’t have to do vast quantities of detailed simulations to do that. Computers weren’t that weak in the mid 1980s, and particle physicists could get access to powerful ones when needed.
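As a rough illustration of how light that kind of estimate is, here is a sketch of the Signal/Sqrt[Background] calculation. The two per-collision rates are placeholders chosen only to make the arithmetic visible; they are not real Higgs or Standard Model rates, and the function names are hypothetical.

```python
import math


def significance(n_collisions, signal_rate, background_rate):
    """Return S / sqrt(B) after n_collisions, given per-collision rates."""
    signal = signal_rate * n_collisions
    background = background_rate * n_collisions
    return signal / math.sqrt(background)


def collisions_needed(signal_rate, background_rate, target=5.0):
    """Number of collisions at which S / sqrt(B) reaches target.

    With S = s*N and B = b*N, S/sqrt(B) = s*sqrt(N/b),
    so N = target**2 * b / s**2.
    """
    return target ** 2 * background_rate / signal_rate ** 2


# Placeholder per-collision rates, for illustration only (assumptions).
SIGNAL_RATE = 1e-12
BACKGROUND_RATE = 1e-10

n_needed = collisions_needed(SIGNAL_RATE, BACKGROUND_RATE)
print(f"collisions needed: {n_needed:.2e}")
print(f"significance there: {significance(n_needed, SIGNAL_RATE, BACKGROUND_RATE):.2f}")
```

The whole estimate is a single formula once the two rates are known, which is why it needs pencil-and-paper work on the rates rather than large-scale simulation.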

Thanks very much (very interesting)!

Regarding the “weren’t that weak” remark… when it came out in the mid-eighties, the Cray 2 was the fastest computer in the world because it could do almost 2 GFLOPS. My little desktop PC probably gets close to 100, and that’s not even counting the GPU. The high-level triggers alone at the LHC use farms of *thousands* of modern PCs. So it is a little hard (for me, at least) to picture how the SSC would have worked. Would it just have thrown away a lot more data? Or recorded it more quickly?

Regarding Xezlec’s comment on the Cray 2 only being 2 gigaflops vis a vis LHC farms, I thought I would add in how another non-expert is interpreting things…

The way I interpreted the answer was that that specific process (the 2 photon decay) must have some sort of “property” that makes it “easier” to build a detector for just that decay, and that you can do a lot of the “computing” (or throw-away) just by building a detector just for that decay.

Alternatively the 2-photon decay may dominate (I guess I need to go through the Higgs articles and check). So you might only have to count the total number of collisions at 125 GeV and not do _any_ selection or whatnot, and so not need much computing power at all.

Of course I have no idea if I’m anywhere near the right track.

OK, Haydon, I’ll buy that, until the professor gets here to set us straight. 🙂

Are we floating on top of a “massive” iceberg, “dark matter”? The visible universe is merely that section of the frequency spectrum which we and our sensors can sense and measure. Maybe the bulk of the matter is “dark” because we cannot “see” it?

Indeed, energy (all energy, both dark and visible) is the alpha and omega of everything in the universe, everything is energy at different states, oscillations. We know that mass is energy at “trapped” (deterministic) oscillatory motions. We also know that these waves are either open (bosons) or closed (fermions).

And now, we have strong hints that a “light” Higgs does exist and does exist at a mass very close to the region of the unstable vacuum.

Wow … what is the first thing that pops in your mind when trying to grasp all this?

delta-E x delta-T ~ h … Energy – Time uncertainty

Yes, the time in this relationship is the time at which the state is unchanged, but it also indicates that time (absolute) can never be zero. (or else there will be nothing, no energy, nothing).

It indicates to me that the Big Bang was a transition point in absolute time, there was a space-time before the “release” of all this energy just as we see and live in this space-time. Maybe an hourglass with a very small neck (smaller than Planck’s scale) is best to describe our universe.

A cyclic universe does not need to violate the second law of thermodynamics if entropy is a process that was created by the Big Bang and hence gets reset (the singularity absolves it and resets it to an arbitrary value) in the next cycle.

So my question is: if dark energy and dark matter are the Big Bang reservoir of energy, then could this indicate that the gravitational field (graviton) is indeed the fundamental field first to be produced?

I’d be curious to read more about how one predicts a proton-proton collision. For instance, how does the internal geometry come into play? Do you model the protons as actual structures colliding, so that perhaps some outer shell of quarks collides first, then some subsequent internal structures? My understanding is that fast-moving particles have a high position uncertainty, so wouldn’t that make them “blur out” their internal geometry? What makes it more difficult to model a quark collision than, say, an electron collision (I understand it must be because the quark interacts with the strong force, but in what ways does that make it harder)?

Thanks.

Unlike some previous and somewhat incorrect articles from NY Times, the editors surprised me… 🙂

http://www.nytimes.com/2012/07/14/opinion/weinberg-why-the-higgs-boson-matters.html?_r=2&pagewanted=all

Since the LHC will run for 7 more weeks this year, giving us some 20 inverse femtobarns of data, perhaps all will be settled with respect to the July 4 announcement.

Possible typo?

…detectors, othe messiness of protons…

Thanks; I don’t know how I introduced that one.

Matt, does the fact that we now know the Higgs (or Higgs-like particle) mass to around 1 GeV precision mean that theorists can better filter (and/or trigger) to search for phenomena that previously could have occurred over too wide a range to be worth searching in? So even though the amount of data is going up by perhaps a factor of 6 before the long shutdown, could the new physics so far found “bootstrap” the rest of the run to pick out more than we could have expected without the Higgs discovery? Or is that question asking too much of your trusty “crystal ball”?

Knowing the Higgs mass and some rough guesses about its properties does help with certain searches for new physics, yes.

Don’t forget that the running of the LHC has been extended by two months which will likely mean more than 20/fb by the end of the year, rather than the 15/fb requested.

I included that in my numbers.

Matt, could you clear something up for me? The number of probable Higgs decays detected in the various channels over years of colliding protons is pretty small in absolute terms. Is it thought that the collisions are actually producing more Higgs all the time but it is extremely difficult to detect the decays, like maybe the detector has a one-in-a-billion chance (or whatever it is) of actually spotting the traces? Or is it really that only one in a billion times those beams collide, a Higgs is produced? (Or is it some product of probabilities of production and detection that adds up to the very low numbers they’ve seen above background? In which case is the bottleneck thought to be more on the production side or the detection side?)

There is a bottleneck on both ends.

1 in 10 billion (i.e. 10,000,000,000) proton-proton collisions produces a Higgs particle.

Of these Higgs bosons, only two in 1000 decay to two photons, and less than one in 10,000 decay to two lepton-antilepton pairs. These are the ones you need for the easy searches… the ones that give you a bump in a plot over a smooth or small background.

There are harder searches for decays to leptons and neutrinos (which are more common but have more background), to tau pairs (again, more common but with more background) and to bottom quark pairs (very common, but impossible to observe unless the Higgs is produced along with a W or Z particle which in turn decays to at least one lepton, which only happens for 1 in 100 Higgs particles).

I think I covered most of this in the articles linked from here: http://profmattstrassler.com/articles-and-posts/the-higgs-particle/the-standard-model-higgs/

Thanks – and for each decay of the correct type that actually occurs, what are the chances thought to be that the detectors will catch the Higgs decay? I couldn’t find that at the links–maybe they pretty much always detect it when it happens?

For these Higgs decays, it tends to be something in the range of 10% – 80%. You miss some because they don’t fire the trigger, and others because the particles go into a part of the detector that isn’t well-instrumented, or because by accident they end up buried in a random jet of hadrons from a random quark or gluon. They have to figure out these fractions with high precision if they want to do high precision measurements… which is one of many things that makes LHC experiments difficult.
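Putting the numbers in this thread together gives a feel for how few usable Higgs decays there are. The sketch below is back-of-envelope arithmetic only: it takes the collision count through mid-2012 from the list in the post (500 plus 600 million million), applies the single 1-in-10-billion production rate to all of them even though the rate differs somewhat between 7 and 8 TeV, and applies the 10% to 80% detection range to the two-photon decays purely as an illustration. The function and parameter names are hypothetical.

```python
def expected_higgs_yields(
    n_collisions,
    production_rate=1e-10,          # 1 Higgs per 10 billion collisions
    diphoton_fraction=2e-3,         # 2 in 1000 decay to two photons
    four_lepton_fraction_max=1e-4,  # fewer than 1 in 10,000 (upper bound)
    efficiency_range=(0.10, 0.80),  # fraction of decays actually caught
):
    """Rough expected counts of produced and detected Higgs decays."""
    produced = n_collisions * production_rate
    diphoton = produced * diphoton_fraction
    four_lepton_max = produced * four_lepton_fraction_max
    low_eff, high_eff = efficiency_range
    return {
        "produced": produced,
        "diphoton": diphoton,
        "four_lepton_max": four_lepton_max,
        "diphoton_detected_low": diphoton * low_eff,
        "diphoton_detected_high": diphoton * high_eff,
    }


# 500 million million (2011) plus 600 million million (through 6/2012).
N_COLLISIONS = (500 + 600) * 1e12

for name, value in expected_higgs_yields(N_COLLISIONS).items():
    print(f"{name}: {value:,.0f}")
```

So out of roughly a thousand million million collisions, only a couple of hundred two-photon decays occur per experiment, and only a fraction of those are caught, all sitting on top of a much larger background.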

Thanks! I had never seen that figure of 10-80% in all the articles I read about this. It makes me appreciate even more how difficult these measurements are if they have to figure out how often the decays can occur and yet not be spotted by the experimenters, for all those different reasons you mentioned. It really is an incredible, incredible feat.

One question that’s been bothering me is rarely addressed: How far can that 125 GeV boson ‘deviate’ from being a pure Standard Model Higgs so that the field connected with it would still deliver the Higgs mechanism as envisioned in 1964? That is, could we reach a point in, say, 6 months, where we have significant evidence for ‘new physics’ beyond the SM, but with the SM itself in the same trouble again that it was in 50 years ago …?

Your question doesn’t have an answer as posed, which is why it isn’t addressed directly. The answer hinges on what form the deviation takes, and what else might be present or not in the LHC data. For example, are you allowing for the possibility that there might be additional Higgs particles? Are you allowing for the possibility that the Higgs might be a composite particle? Are you allowing for the possibility of additional, as yet unknown particles that interact with the Higgs? Unless you specify the problem more precisely, your question simply can’t be answered; if you consider all possibilities, then the Higgs particle could differ 100% from what is expected and still deliver the Higgs mechanism.