A reminder: tonight (April 3) at 6pm I’ll be giving a public lecture about my book, along with a Q&A in conversation with Greg Kestin, at Harvard University’s Science Center. It’s free, though they request an RSVP. More details are here. Please spread the word! (Next event in Pasadena, CA on April 10th.)

Tag: supersymmetry

I hope that a number of you will be able to see the total solar eclipse next Monday, April 8th. I have written about my adventures taking in a similar eclipse in 1999, an event which had a profound impact on me. Perhaps my experience might give you some things to think about that go well beyond the merely scientific.

Meanwhile, for those who can only see a partial solar eclipse that day, there’s still something really cool (and very poorly appreciated!) that you cannot do on an ordinary day: you can easily estimate the size of the Moon, and then quickly go on to estimate how far away it is. This is daylight astronomy at its best!

Side note for those in the Boston area: I’m speaking about my new book at Harvard on Wednesday April 3rd.

POSTED BY Matt Strassler

ON April 1, 2024

I’m beginning a period of travel and public speaking, so new posts may be a bit limited for a time. (Meanwhile, explore this site’s other offerings!) Tomorrow, Thursday March 28th, I’ll be in Nashville, at Vanderbilt University’s department of physics and astronomy, giving a talk (at 4 pm) about the subjects covered in my recent book. Next, on Wednesday April 3rd (6 pm), I’ll be in Cambridge, Massachusetts, giving a public talk about the book at the Harvard Science Center, as organized by the Harvard Bookstore (the wonderful independent book store located right in Harvard Square). [Free, but RSVP.]

Then I’ll be on the west coast for a couple of weeks; if you live out there, check out this site’s upcoming-events page. And if you can’t attend any of these events, you can always listen to the recent podcasts that I’ve been on, with Sean Carroll (here) and with Daniel Whiteson (part 1 and part 2.)

More physics coming soon!

POSTED BY Matt Strassler

ON March 27, 2024

I recently pointed out that there are unfamiliar types of standing waves that violate the rules of the standing waves that we most often encounter in life (typically through musical instruments, or when playing with ropes and Slinkys) and in school (typically in a first-year physics class.) I’ve given you some animations here and here, along with some verbal explanation, that show how the two types of standing waves behave.

Today I’ll show you what lies “under the hood” — and how you yourself could make these unfamiliar standing waves with a perfectly ordinary physical system. (Another example, along with the relevance of this whole subject to the Higgs field, is discussed in chapter 20 of the book.)

POSTED BY Matt Strassler

ON March 25, 2024

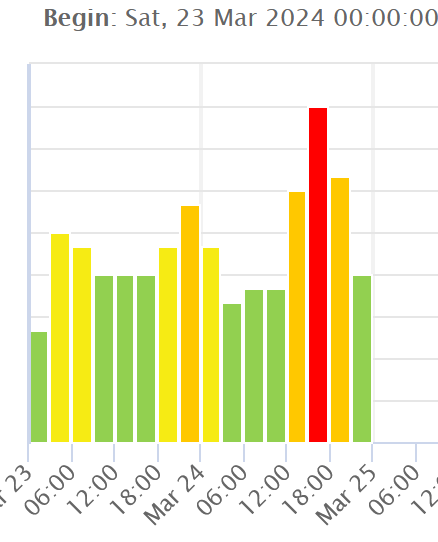

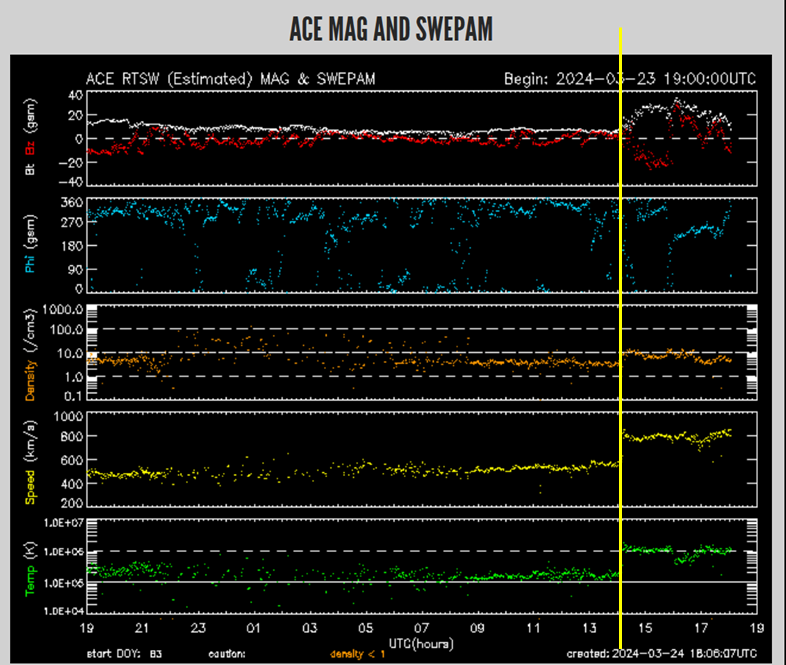

[Note Added: 9:30pm Eastern] Unfortunately this storm has consisted of a very bright spike of high activity and a very quick turnoff. It might restart, but it might not. Data below shows recorded activity in three-hour intervals — and the red or very high orange is where you’d want things to be for mid-latitude auroras.

Quick note: a powerful double solar flare from two groups of sunspots occurred on Friday. This in turn produced a significant blast of subatomic particles and magnetic field, called a Coronal Mass Ejection [CME], which headed in the direction of Earth. This CME arrived at Earth a few hours ago, earlier than expected, which also means it was probably stronger than expected. For those currently in darkness and close enough to the poles, it is probably generating strong auroras, also known as the Northern and Southern Lights.

No one knows how long this storm will continue, but check your skies tonight if you are in Europe especially, and possibly North America as well. The higher your latitude and the earlier your nightfall compared to now, the better your chances.

POSTED BY Matt Strassler

ON March 24, 2024

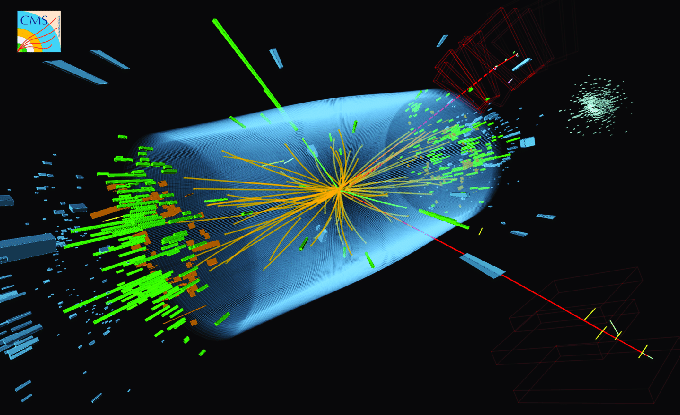

A decay of a Higgs boson, as reconstructed by the CMS experiment at the LHC